DigiFlow User Guide

Version 4.0, January 2017

© Dalziel Research Partners 2000 – 2017

1 Introduction

DigiFlow provides a range of image processing features

designed specifically for analysing fluid flows. The package is designed to be

easy to use, yet flexible and efficient, and includes a powerful yet flexible

macro language. Whereas most image processing systems are intended for

analysing or processing single images, DigiFlow is designed from the start for

dealing with sequences or collections of images in a straightforward manner.

Before installing or using DigiFlow, please read the Licence

Agreement (see §16) and ensure you have

completed the registration requirements.

1.1 History

The origins of DigiFlow lie in an earlier system by the same

author: DigImage. This earlier system, with its origins in 1988 and first

released commercially in 1992, pioneered many uses of image processing in fluid

dynamics. Utilising its own DOS-extender technology, DigImage existed in the

base 640kB of DOS memory (and later from the command prompt under Windows 3.x

and 9x), accessing around 12MB of extended memory for image storage and

interface with the framegrabber hardware.

To obtain the necessary performance in these early days of

image processing on desktop computers, DigImage required a framegrabber card to

be installed to provide not only image capture, but also image display and some

of the processing. While this close coupling allowed efficient real-time

processing and frame-accurate control of a video recorder, it ultimately

restricted the development and deployment of the technology. The original ISA

bus based Data Translation DT2861 and DT2862 frame grabber cards remained available

until 2001, but by that time suitable motherboards had become difficult to source.

At time of writing (2007) and despite its reliance on outdated technology,

DigImage is still used in many laboratories around the world.

The development of DigiFlow began in 1994, although the

project had a number of false starts and development put on hold a number of

times due to other commitments. The code of this version has its origins in

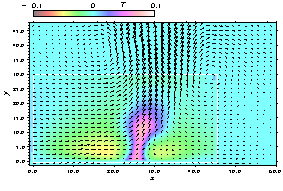

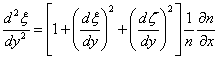

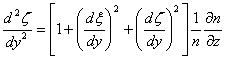

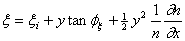

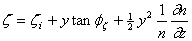

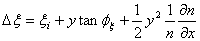

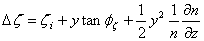

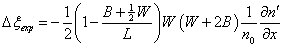

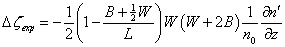

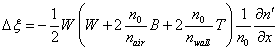

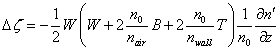

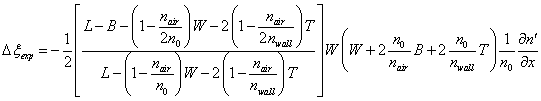

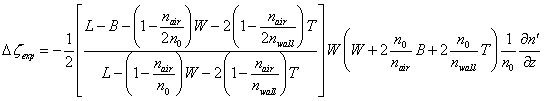

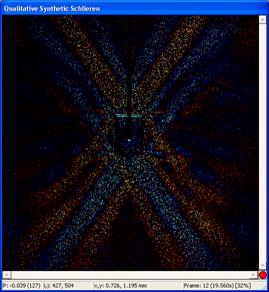

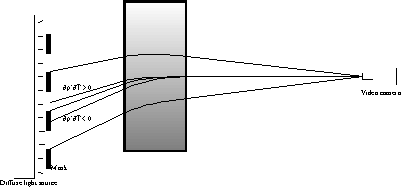

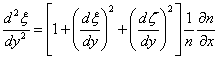

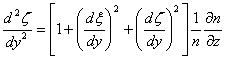

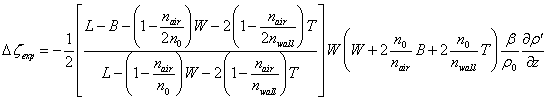

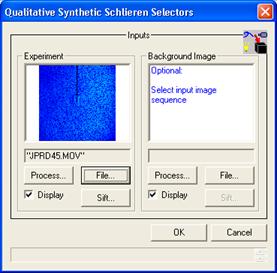

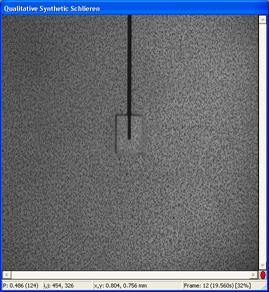

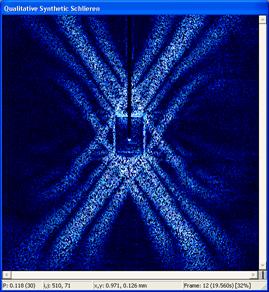

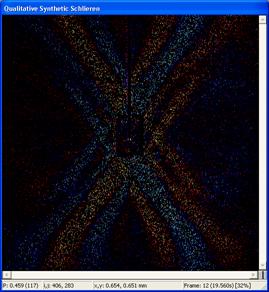

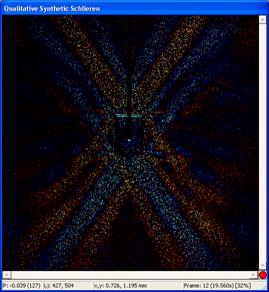

1997 as part of the development of synthetic schlieren (see §5.6.4). The computational and resolution requirements for

synthetic schlieren could not be accommodated efficiently within the framework

of DigImage.

Despite sharing many approaches, algorithms and techniques, DigiFlow does not re-use any of DigImage’s 8MB of Fortran 77 and 2MB of Assembler source code. The design goals for power, flexibility and efficiency in DigiFlow could only be achieved by starting again from scratch.

DigiFlow builds on experience with DigImage from the user’s viewpoint to provide a more powerful, more flexible, but simpler interface. It also builds on the programming experience to provide a more flexible, powerful and maintainable code base (now in excess of 15MB of source).

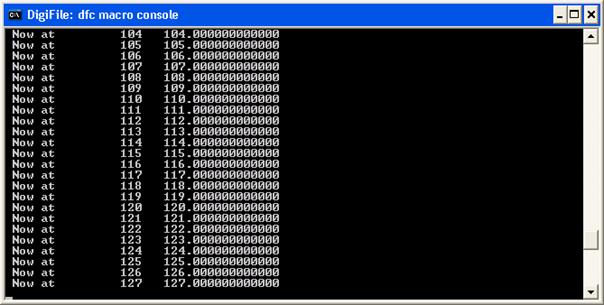

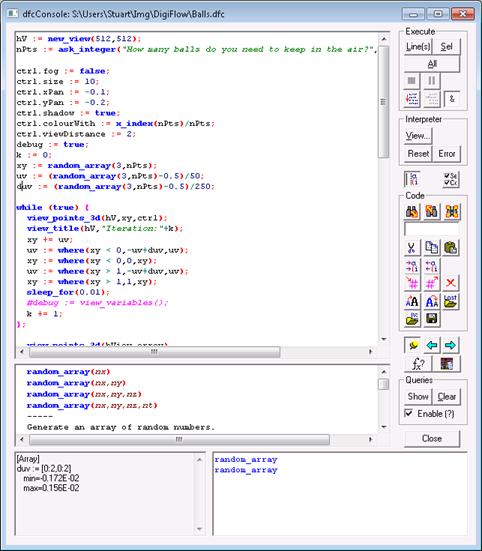

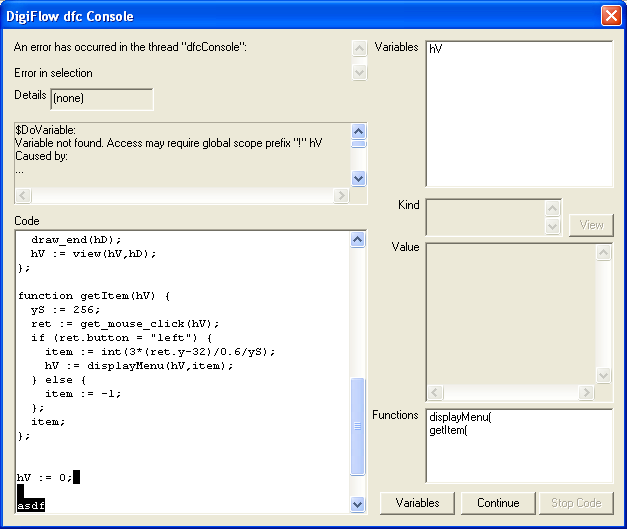

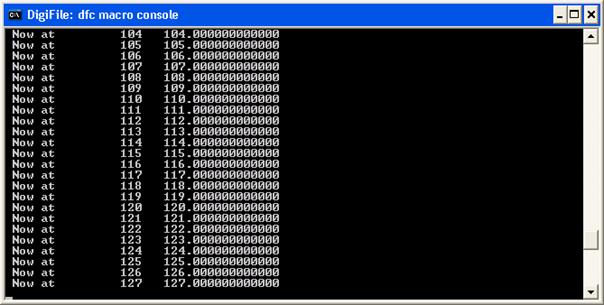

A central feature of DigiFlow is a powerful macro language (dfc) and interpreter.

This provides users with an efficient and flexible environment in which to

automate and customise processing, as well as proving to be a very useful

general computational and plotting tool.

Versions of DigiFlow have been in use in Cambridge since

2000, and at other selected laboratories since 2002. Its wider dissemination

began in late 2003 with a series of beta releases. The first commercial release

(version 1.0) dates from February 2005, with parallel processing and other technologies

providing substantial speed increases being introduced with version 2.0 during

2007. Version 3.0, released in 2008, provides further performance improvements

plus a wealth of new processing features.

1.2 Key features

DigiFlow has been designed from the outset to provide a powerful

yet efficient environment for acquiring and processing a broad range of

experimental flows to obtain both accurate quantitative and qualitative output.

Central to the design philosophy is the idea that an image stream may be processed as simply as a single image. Image streams may consist of a sequence of images (e.g. from a ‘movie’), or a collection of images related in some other manner.

Efficiency is obtained through the use of advanced algorithms (many of them unique to DigiFlow/DigImage) for the built-in processing options.

Power and flexibility are obtained through an advanced, fully integrated macro interpreter (using DigiFlow’s dfc macro language) providing a level of functionality similar to that of industry-standard applications such as MATLAB. This interpreter is available to the user either to run macros directly, or as part of the various DigiFlow tools to allow more flexible and creative use. Commercial versions of DigiFlow include additional features such as partial compilation to further improve performance.

Although a framegrabber is not an essential component, DigiFlow retains the potential that DigImage released through its control of a framegrabber. Not only does this greatly simplify the process of running experiments, acquiring images, processing them, and extracting and plotting data, but it also enables real-time processing of particle streaks and synthetic schlieren, for example.

1.3 User guide

This User Guide is designed to provide the primary reference

for DigiFlow. The User Guide is supplied in both .html and .pdf formats and is linked to the help

system within DigiFlow. Pressing the F1 function key within DigiFlow will start a web browser

and take you to the most appropriate point in the .html version of the User Guide.

The User Guide is not in itself complete: detailed

descriptions of the many functions provided by the macro interpreter may be

found in the interactive help system (Help: dfc Functions). The User Guide is also

supplemented by a variety of scientific publications that expand on some of the

underlying technologies.

The typographical convention used in the User Guide is

described below:

| Typography | Description |
|---|---|
| Analyse | Windows elements such as prompts, menu items and dialogs. |
| Expt_A.dfi | File names, etc. |
| read_image() | Interpreter commands and functions. |
| := | Interpreter operators and syntax. |
| "string" | Interpreter operators and syntax. |
| my_image | Variables, numbers, etc., for the interpreter. |
| file0 | Formal argument names for interpreter functions. |

2 Installation

Although DigiFlow will work on any Windows XP or later

machine, we recommend that you avoid using Windows Vista if possible as the

performance of Vista is significantly worse than either Windows XP or Windows

7. There are versions of DigiFlow that can operate under both 32-bit and 64-bit

implementations, although at present it cannot control a digital video camera

under a 64-bit implementation of Windows.

2.1 Basic installation

DigiFlow is a typical Windows application with a graphical

user interface, menus, dialog boxes and toolbars. However, unlike many

applications, DigiFlow does not require a special installation procedure, but

can simply be copied to the desired directory. In most cases DigiFlow will be

delivered in a .zip

or self-extracting (.exe)

archive file, downloaded from the web. This should simply be unzipped into your

selected directory. However, to make the best of DigiFlow, there are some additional

settings and tasks to be completed. The setup.bat file that is copied to the

installation folder will help with this process. Refer to GettingStarted.pdf for further details.

The installed part of DigiFlow consists of DigiFlow.exe, which

contains the core functionality, and a range of DLL files that handle specific

menu options. DigiFlow also makes use of various global start-up files stored

in the same directory.

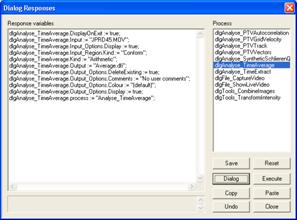

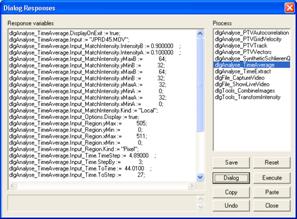

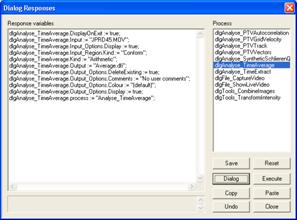

During use, DigiFlow generates two status files in the

directory in which it is started. These are DigiFlow_Status.dfs (§13.5), which contains a range of information describing

the settings, and DigiFlow_Dialogs.dfs

(§13.4), which records your last responses to many

of the prompts, etc. By storing this information in the directory in

which DigiFlow is started, DigiFlow is able to keep a separate set of

information for each user, or for each specific task, without polluting the

registry. Additionally, these status files can be deleted or moved as the user

wishes. In some circumstances, DigiFlow_Status.dfs

may become corrupted. If DigiFlow fails to start, or exhibits unexpected

behaviour, you should try removing (or renaming) DigiFlow_Status.dfs to see if this cures

the problem.
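The recovery step described above (setting aside a possibly corrupted DigiFlow_Status.dfs) can be scripted. The following is a minimal Python sketch and is not part of DigiFlow; the function name set_aside_status and the .bak naming scheme are illustrative assumptions. It renames the file rather than deleting it, so the old settings can be restored if they turn out not to be the cause.

```python
from pathlib import Path


def set_aside_status(work_dir, name="DigiFlow_Status.dfs"):
    """Rename the status file in work_dir so DigiFlow starts with fresh settings.

    Returns the path of the backup, or None if there was no status file.
    The '.bak' suffix scheme is an assumption made for this sketch.
    """
    status = Path(work_dir) / name
    if not status.exists():
        return None

    # Find a backup name that is not already in use.
    backup = status.with_name(status.name + ".bak")
    counter = 0
    while backup.exists():
        counter += 1
        backup = status.with_name(f"{status.name}.bak{counter}")

    status.rename(backup)
    return backup
```

Calling set_aside_status(".") from the directory in which DigiFlow is started leaves DigiFlow_Dialogs.dfs untouched, so your last responses to prompts are retained.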

It is recommended that you use a new directory for each new

set of experiments and for each new project. In this way the DigiFlow strategy

of storing localised status files will facilitate use of DigiFlow in the

various different contexts. In such an environment it is frequently most

convenient to start DigiFlow from the command prompt within the appropriate

directory structure, although other strategies such as multiple shortcuts or

setting up associations for Windows Explorer are also possible.

If you wish to run DigiFlow from a command prompt (strongly recommended), it is worth putting the DigiFlow installation directory on the path so that DigiFlow may be started by simply typing DigiFlow at the prompt (DigiFlow will normally add itself to the search path the first time it is run to enable this). If you prefer to start DigiFlow from the desktop or start menu, you will need to create a shortcut at that point and set the Start in directory appropriately. It is strongly recommended that you do not run DigiFlow from the directory in which the program resides, except during the set-up procedure.

2.2 DigiFlow configuration

Details of the basic setup and configuration of DigiFlow under Windows are covered in GettingStarted.pdf. This section reiterates some of the key points and highlights other considerations that may facilitate your use of DigiFlow. Note that DigiFlow can also be installed to run under Wine on a Linux machine, although in that case it is not possible to control a digital video camera, and .eps (Encapsulated PostScript) files do not have access to the normal range of fonts and so appear visually less satisfying.

2.2.1 Basic configuration

Specification of the file extension for file names within

DigiFlow is mandatory in most circumstances as DigiFlow utilises this extension

to determine the file type for output. However, by default, Windows XP and

later hide the extensions to files of known types, a feature that can cause

problems with DigiFlow. We recommend, therefore, that you turn off this

feature. DigiFlow will attempt to do this for itself, but this may not work on

some systems. If DigiFlow does not make all extensions visible automatically,

then you may achieve this manually through the View tab of Tools: Folder Options under Windows Explorer.

Simply remove the check mark from Hide extensions for known file types. Note that this will

need to be done for each DigiFlow user.

By default, DigiFlow will not be associated with any file types or extensions, unless you install it using setup.bat (in which case .dfc, .dfd, .dfi, .dfm, .dfs and .dft will be associated with DigiFlow). The easy way to make or add such associations is to right-click on a file with the relevant extension, select Open with (or Open if Open with is not visible), choose the default program from the Open With dialog and check the Always use… box. If DigiFlow is not listed in this dialog, then locate it using the Browse button.

Figure 1: The Open With dialog for selecting the default program.

We recommend that the following extensions are associated

with DigiFlow on all installations: .dfc, .dfd,

.dfi, .dft and .dfs. You may also

wish to set up associations for other standard image formats such as .bmp, .tif, .png and .jpg.

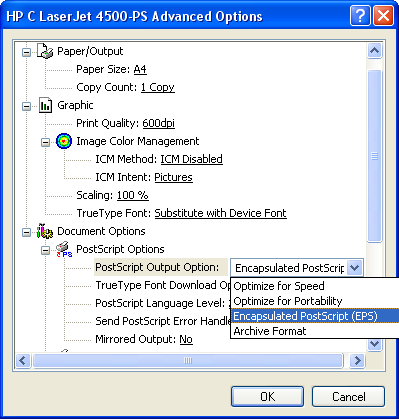

2.2.2 Encapsulated PostScript configuration

DigiFlow can create Encapsulated PostScript (.eps) files from image

and graphical output for incorporation into documents in packages such as LaTeX

and Word. This can be achieved either through DigiFlow’s inbuilt .eps facility, or

using a Windows printer driver. The former is restricted to bit images (or a

rasterised version of graphics), whereas the latter can produce both bit image

and vector graphics.

By default, DigiFlow searches for a printer named EPS to use to create the .eps files. Creation of this printer is relatively straightforward. Start the Add Printer Wizard from the Printers and faxes window, selecting Local printer attached to this computer and using the File: (print to file) port. Select a PostScript printer driver (we recommend the HP C LaserJet 4500-PS if you are using Windows XP, or the Xerox Phaser 6120 PS if you are using Windows 7) and name the printer “EPS”. (You do not want to make this the default printer; you may, however, wish to share the printer to simplify the setting up of further machines.) For Windows Vista, it is recommended that you download an Adobe PostScript driver from www.adobe.com as some of the drivers distributed with Windows Vista format their PostScript in a manner that inhibits the use of LaTeX packages such as psfrag.

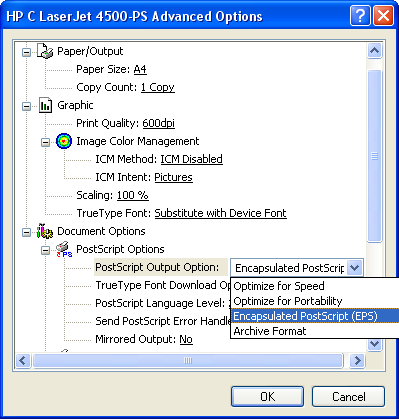

Once the wizard has finished, right-click on the new EPS printer and select Printing preferences. Click on the Advanced button, then expand Document Options and PostScript Options within it. Under PostScript Output Option select Encapsulated PostScript (EPS), as indicated in figure 2.

Figure 2: Encapsulated

PostScript (.eps)

printer setup.

Note: if you are using Remote Desktop to access the computer with DigiFlow installed, it is best to disable the feature that makes local printers available to the remote session, as this can cause problems if the EPS printer exists on your local machine.

DigiFlow cannot itself read back in an Encapsulated PostScript file it produces. However, if DigiFlow detects that GhostScript is installed on the machine, then DigiFlow will attempt to use GhostScript to help it load the .eps file in an appropriate format. For this to work, GhostScript must be on the system PATH and the GS_LIB environment variable must be set up to point to the GhostScript libraries.
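The two GhostScript prerequisites described above (an executable on the PATH and a GS_LIB variable pointing at the libraries) can be checked before relying on .eps import. The following Python sketch is only an illustration and does not reproduce how DigiFlow itself performs the detection; the function name ghostscript_status is hypothetical, and the executable names gswin64c, gswin32c and gs are the usual GhostScript command-line names rather than anything specified in this guide.

```python
import os
import shutil

# Usual GhostScript console executables (64-bit Windows, 32-bit Windows, Unix/Wine host).
GS_NAMES = ("gswin64c", "gswin32c", "gs")


def ghostscript_status(env=None):
    """Report whether GhostScript looks usable in the given environment.

    env is a mapping such as os.environ; it is a parameter so the check
    can be tried against a hypothetical environment.
    """
    env = os.environ if env is None else env
    search_path = env.get("PATH", "")

    # Look for any of the known executable names on the search path.
    executable = None
    for name in GS_NAMES:
        executable = shutil.which(name, path=search_path)
        if executable:
            break

    # GS_LIB may list several directories; all of them should exist.
    lib_dirs = [d for d in env.get("GS_LIB", "").split(os.pathsep) if d]
    gs_lib_ok = bool(lib_dirs) and all(os.path.isdir(d) for d in lib_dirs)

    return {"executable": executable, "gs_lib_ok": gs_lib_ok}
```

If either entry in the returned dictionary is empty or False, loading an .eps file back into DigiFlow is unlikely to succeed.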

Note that GhostScript is not distributed with or required

by DigiFlow. Use of GhostScript is governed entirely by the licence of that

product and not by the DigiFlow Licence.

2.2.3 Additional memory

The maximum linear address range under 32-bit Windows is 32 bits, or 4GB. By default under Windows this is subdivided into two ranges for each process. The first 2GB of memory is for the process’s own use, while the second is for the operating system. Although 2GB superficially appears a lot, there are times when it would be useful to have more. (At the time Windows was designed, 2GB was considered a good approximation to an unlimited memory resource, but things have moved on…) With Windows XP and later it is possible to change the 50:50 default split to reserve 3GB for processes, restricting the system to the remaining 1GB. Not all software, particularly some drivers, supports this extension. DigiFlow, however, does, and so if you start running low on virtual memory, it may be worth a try.

To install the 3GB process memory option, select System Properties

(right click on My Computer

and select Properties)

then the Advanced

tab. Click the Startup and

Recovery Settings button, then the Edit button to open NotePad to make the necessary changes.

Note that you need to have Administrative access rights to be able to do this.

Figure 3: Dialogs

for setting the /3GB

option to increase available virtual memory.

NotePad

will allow you to edit the boot.ini

file that controls the startup of Windows. Typically, this will look like

[boot loader]

timeout=30

default=multi(0)disk(0)rdisk(0)partition(1)\WINDOWS

[operating systems]

multi(0)disk(0)rdisk(0)partition(1)\WINDOWS="Microsoft Windows XP Professional" /noexecute=optin /fastdetect

To enable the 3GB option, you need to add the /3GB switch to

the end of the line specifying Windows startup. It is best to do this by adding

an additional startup option so that you can boot your machine in either

standard 2GB or 3GB modes. The resulting boot.ini should look something like

this:

[boot loader]

timeout=30

default=multi(0)disk(0)rdisk(0)partition(1)\WINDOWS

[operating systems]

multi(0)disk(0)rdisk(0)partition(1)\WINDOWS="Microsoft Windows XP Professional" /noexecute=optin /fastdetect

multi(0)disk(0)rdisk(0)partition(1)\WINDOWS="Microsoft Windows XP Professional (3GB)" /noexecute=optin /fastdetect /3GB

Note that the order of these two lines determines

whether the default boot is 2GB or 3GB. In the above example, the standard 2GB

boot is the default. Reverse the order of the two lines to make the 3GB boot

the default.
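The boot.ini change described above amounts to duplicating the existing Windows entry, marking its label, and appending the /3GB switch. The Python sketch below illustrates that text transformation only; it does not read or write the real boot.ini (which requires administrative access), and the function name add_3gb_entry is hypothetical. It assumes the entry has the form path="label" switches, as in the example above.

```python
def add_3gb_entry(boot_ini: str) -> str:
    """Return boot.ini text with an extra '(3GB)' entry after the first OS entry.

    The text is returned unchanged if a /3GB switch is already present.
    """
    if "/3gb" in boot_ini.lower():
        return boot_ini

    out = []
    in_os_section = False
    added = False
    for line in boot_ini.splitlines():
        out.append(line)
        stripped = line.strip()
        if stripped.startswith("["):
            # Track whether we are inside the [operating systems] section.
            in_os_section = stripped.lower() == "[operating systems]"
            continue
        if in_os_section and not added and "=" in stripped:
            path, rest = stripped.split("=", 1)
            if rest.startswith('"') and rest.count('"') >= 2:
                end = rest.index('"', 1)      # closing quote of the label
                label = rest[1:end]
                switches = rest[end + 1:]      # e.g. ' /noexecute=optin /fastdetect'
                out.append(f'{path}="{label} (3GB)"{switches} /3GB')
                added = True
    return "\n".join(out) + "\n"
```

Because the new entry is placed second, the standard 2GB boot remains the default, matching the example above.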

2.3 Installation with framegrabber

If you are installing DigiFlow in a machine equipped with a

BitFlow R2, R3, R64 or R64e series framegrabber then some additional steps are

required. These require administrative access to implement.

2.3.1 Framegrabber installation

The framegrabber should be installed and tested using the

BitFlow installation procedure. You will require the BitFlow drivers for

version 5.00, 5.20 or 5.30. Later versions may also be supported (contact

Dalziel Research Partners for details). You should note that on some systems

the BitFlow installation procedure can hang; if this occurs, try installing

after rebooting Windows in Safe Mode.

The BitFlow framegrabber requires a configuration file (.cam, .rcl or .r64) for the camera

being used. Configuration files for cameras known to work with DigiFlow may be

found at http://www.dalzielresearch.com/digiflow/cameras/.

If you have a multi-user system where most users do not have administrative access, we recommend that you change the permissions on the BitFlow software to allow all users to change the camera configuration file if and when they need to. This is achieved using the Registry Editor (regedit.exe; accessible from the command prompt) to adjust the permissions on all keys in the registry relating to ‘BitFlow’ by adding the ‘Authenticated Users’ security principal with ‘Full control’. Failure to do this would mean that only users with administrative access could change the camera configuration.

2.3.2 Camera configuration

DigiFlow requires information over and above what is provided in the configuration file for the framegrabber card. This additional information about the camera capabilities and user preferences is stored in DigiFlow_Cameras.dfc; consult §13.3 for details of the format of this file. Cameras not listed in this file have not been tested, although there is a reasonable chance that all that is required (for a camera supported by the BitFlow framegrabber) is the addition of appropriate entries, provided a suitable camera configuration file is also available for the BitFlow framegrabber. Please contact Dalziel Research Partners if you require any help or guidance with this.

2.3.3 Local security policy

In the ‘Local security policy’ (found in the ‘Administrative

tools’ section of the ‘Control Panel’), open the ‘Local Policies: User Rights

Assignment’ option. You need to add permission for all DigiFlow users to the

following items:

· Adjust memory quotas for a process

· Increase scheduling priority

· Lock pages in memory

It is suggested that you do this by giving full control to ‘Authenticated users’.

These adjustments are necessary to ensure that DigiFlow is

able to manage the machine performance adequately to ensure trouble-free

capture.

2.3.4 Video capture configuration

It is strongly recommended that video is captured to a disk other than the one containing the operating system in order to obtain adequate performance. The necessary disk system bandwidth may be in excess of 240MB/s in some cases (e.g. with a Dalsa 4M60 camera), thus requiring a RAID 0 array or the use of Windows to ‘stripe’ across multiple disks. However, for most cameras 40MB/s is sufficient and this may be achieved via a fast IDE or SATA disk (but not the one the operating system is on!).
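The bandwidth figures quoted above follow from simple arithmetic: sustained data rate is image width × height × bytes per pixel × frame rate. The short Python sketch below illustrates this; the function name is hypothetical, and the sensor figures used in the usage note (2352 × 1728 pixels, 8 bits per pixel, 60 frames per second) are assumptions about a camera of the Dalsa 4M60 class that are not stated in this guide.

```python
def required_bandwidth_mb_s(width, height, bytes_per_pixel, frames_per_second):
    """Sustained disk bandwidth (in MB/s, 1 MB = 10**6 bytes) needed to
    stream uncompressed frames to disk without dropping any."""
    bytes_per_frame = width * height * bytes_per_pixel
    return bytes_per_frame * frames_per_second / 1e6
```

Under the assumed figures, required_bandwidth_mb_s(2352, 1728, 1, 60) gives a little under 244MB/s, consistent with the "in excess of 240MB/s" quoted above. The same function can be used to check whether a slower camera falls within the 40MB/s achievable with a single fast disk.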

The capture process in DigiFlow can be configured in two ways. Either you can directly specify the capture file and location each time (risking the user specifying a disk system with insufficient bandwidth), or you can set up DigiFlow to capture to a fixed location and require the user to ‘review’ (and possibly edit) the sequence in order to copy it into their own directory space. For multi-user systems, this second option is generally preferred as it allows users to utilise the capture facility like a video recorder while preventing retention of unwanted video footage.

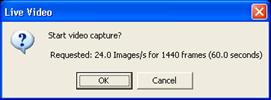

The default configuration takes the second option, and

assumes that the capture location is V:\Cache\CaptureVideo.dfm. We recommend that you

configure your system so that this directory exists (either by appropriate

naming of the capture disk, or by setting up a share to an appropriate point

and then connecting to it). This directory must not be compressed and must have

full access for all DigiFlow users. Once you have created this directory, you

should run File: Live Video:

Setup (see §5.1.5.3 for further

details) to create the initial V:\Cache\CaptureVideo.dfm.

It is strongly recommended that you do this before writing any other data to the capture disk. Details on how to change the name or location of the cache file may be found in §13.2.

It is important that the space

DigiFlow reserves in this file remains as a single contiguous block on the disk

drive. If it becomes fragmented for any reason then, due to the very high data

transfer rates required, DigiFlow may not be able to write to the disk as fast

as data becomes available from the camera and so timing errors may result.

Once created, V:\Cache\CaptureVideo.dfm

will be flagged as Read only

by the operating system (although DigiFlow will still be able to write to it).

The file will not shrink if a smaller sequence is captured, but may grow if one

larger than that specified during File: Live Video: Setup is requested (note that there is

a risk of fragmentation if this occurs). It is important, therefore, that you

go through the review process outlined in §5.1.5.2,

rather than simply copying this file, as in general only a part of the file

will contain valid data.

Consult §13.2 on DigiFlow_LocalData.dfc

should you wish to change the name or location of V:\Cache\CaptureVideo.dfm.

3 Basics

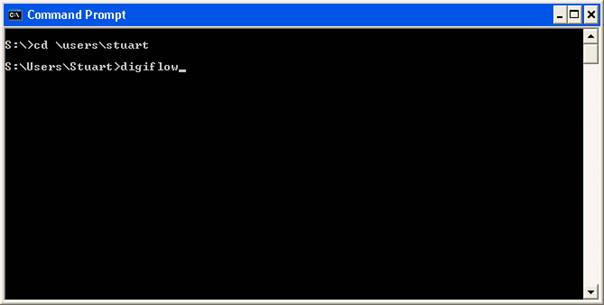

3.1 Starting DigiFlow

It is recommended that you use a new directory for each new

set of experiments and for each new project. In this way the DigiFlow strategy

of storing localised status files will facilitate use of DigiFlow in the

various different contexts. In such an environment it is frequently most

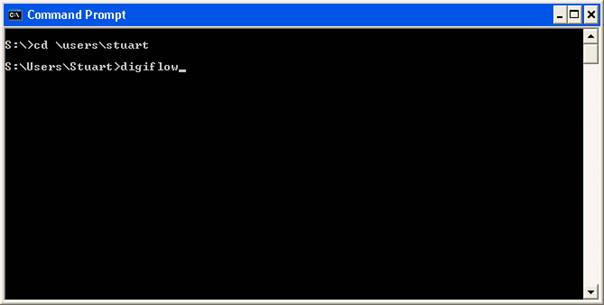

convenient to start DigiFlow from the command prompt (see figure 4) within the appropriate directory structure, although

other strategies such as multiple shortcuts or setting up associations for

Windows Explorer are also possible.

Figure 4:

It is frequently most convenient to start DigiFlow from the command prompt.

If you wish to run DigiFlow from a command prompt (strongly

recommended), it is worth putting this directory on the path so that DigiFlow

may be started by simply typing DigiFlow at the prompt, and placing a shortcut to the

command prompt on the topmost level of the Start button. It is also worth

pinning a shortcut to the command prompt to the start menu (and/or task bar for

Windows 7), and in the properties setting the Start in path to an appropriate

location.

If you are not familiar with the use of the command prompt,

then the following brief list of the most useful commands may be of some value.

| Command | Description |
|---|---|
| cd folder | Change to the directory (folder) located within the current directory. |
| cd .. | Move up one directory level. |
| cd \ | Move to the topmost (root) directory on the current drive. |
| dir | List all files in the current directory. |
| dir *.dfi | List all .dfi files in the current directory. |
| dir *.dfc /s | List all dfc files located either in the current directory, or any subdirectories. |
| move file dest | Move file to a new directory dest. Can also be used to rename folders. |
| copy srce dest | Copy the file srce to dest. |
| xcopy srce dest | A more flexible form of copy. |
| xcopy srce dest /s/d | If srce and dest are directories, then this will copy all the files in the directory and any subdirectories to dest, but only if the srce version is newer. This might be used, for example, as xcopy expt\*.dfd results /s/d to update a collection of DigiFlow drawings (dfd files) in a results folder. |
| ren file new | Rename file with the name new. |
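For users who prefer scripting to the command prompt, the behaviour of the xcopy srce dest /s/d entry in the table above (copy through subdirectories, but only where the source is newer) can be approximated as follows. This is a Python sketch under stated assumptions, not a replacement for xcopy: the function name copy_newer is hypothetical, and "newer" is judged purely by file modification time.

```python
import shutil
from pathlib import Path


def copy_newer(srce, dest, pattern="*"):
    """Copy files matching pattern from srce (and its subdirectories) to dest,
    skipping any file whose copy in dest is at least as recent.

    Returns the list of destination paths that were written.
    """
    srce, dest = Path(srce), Path(dest)
    copied = []
    for src_file in srce.rglob(pattern):
        if not src_file.is_file():
            continue
        target = dest / src_file.relative_to(srce)
        if target.exists() and target.stat().st_mtime >= src_file.stat().st_mtime:
            continue  # destination is up to date
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src_file, target)  # copy2 preserves the modification time
        copied.append(target)
    return copied
```

For example, copy_newer("expt", "results", "*.dfd") updates a results folder with any DigiFlow drawings that have changed since the last copy.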

A very useful feature of Windows XP and later is that the <tab> key will expand a file name. For example, if you are in a directory that contains subdirectories named Expt1, Expt2 and Expt3, then typing cd e followed by <tab> will expand this to cd Expt1. Pressing <tab> a second time will change this to cd Expt2, and so on. To find out more about the commands available at the command prompt, search for command prompt in the Windows Help and Support Centre and select Using Command Prompt. Alternatively, if you know the name of the command but want more details of its options, type the command followed by /? at the command prompt (see figure 5 for an example).

Figure 5: Help is

available for a command at the command prompt by adding /? after the command.

If you prefer to start DigiFlow directly from the desktop or

start menu, you will need to create a shortcut at that point and set the Start in directory

appropriately (see figure 6). It is strongly

recommended that you do not normally run DigiFlow from the directory in which

the program resides.

Figure 6:

Properties dialog for a short cut to start DigiFlow.

DigiFlow supports a number of command line arguments. The

most common use is to specify either an image file or movie to be loaded when

DigiFlow starts, or a dfc

file to be run. In both these cases, simply type DigiFlow at a command prompt, followed

by the name of the image file/movie or dfc file.

Additionally, there are a number of command line switches

that can be used in special circumstances. These are given in the following

table.

| Switch | Description |
|---|---|
| /? | Give the command line options for starting DigiFlow. |
| /allowautorun | Causes files named _autorun.dfc to be started automatically when they are created in the current folder. Note that they will be deleted once they have been run. |
| /archive | Turns on the .dfa archive file generation system when not enabled by default. |
| /noarchive | Turns off the .dfa archive file generation system. |
| /dfa | Identical to /archive. |
| /autopreprocess | Turns on the auto-preprocess mechanism (using .dfb filter files). |
| /noautopreprocess | Turns off the auto-preprocess mechanism (using .dfb filter files). |
| /bitflow:n | For machines with more than one BitFlow framegrabber installed, specifies which board this instance of DigiFlow is to use. Note: there should be no space between the colon and the number. |
| /camera:camerafile | Specifies that a particular camera configuration file should be used rather than the default (specified through the BitFlow SysReg utility). Note: there should be no space between the colon and the name of the camera file. |
| /camerabuffer:option | Specifies the type of buffering to be used for the capture file. One of: "(default)", "buffer", "nobuffer" or "writethrough". |
| /con | Start up a console window at the same time as starting DigiFlow. (The console window can also be started from dfc code using open_console() or open_file().) |
| /debug | Turns on all logging options (to DigiFlow.log) for debug purposes. |
| /dev | Enable certain features related to internal performance monitoring. Intended for use by the developer. |
| /disablewritequeue | Disable the threaded writing of images, thus forcing the process generating the image to wait until the image has been written before moving on to the next stage. |
| /enablewritequeue | Enable the threaded writing of images. This speeds up processing by allowing the process generating the images to proceed to the next image before the write is complete. Enabled by default. |
| /disableparallelwrite | Certain aspects of reading and writing images may have problems if accessed simultaneously by multiple threads. Use of this flag introduces mutexes (blocks of code where a thread has mutually exclusive execution) to prevent simultaneous access. This has a detrimental impact on performance. |
| /enableparallelwrite | Certain aspects of reading and writing images may have problems if accessed simultaneously by multiple threads. Use of this flag turns off the mutexes (blocks of code where a thread has mutually exclusive execution) that would otherwise prevent simultaneous access. Allowing simultaneous access improves performance. |
| /dpi:n | Tells DigiFlow to assume the display has n pixels per inch. This is used to work out the size of some of the visual elements in the user interface. Note that including DigiFlow.Options.Display.dpi and DigiFlow.Options.Display.Scaling in DigiFlow_LocalData.dfc sets the assumed pixels per inch and scaling (respectively) of the screen. |
| /stack:multiple | Sets the multiple for stack size allocation. If not specified, then the default multiple is 1.0. This switch can be used in an attempt to decrease or increase the size of the stack created for separate computational threads in the event of memory problems. |
| /timing | This turns on a performance timing feature incorporated in some of the DigiFlow facilities. |
| /wine | Changes some features to improve performance when running DigiFlow under Wine on Linux. |

The final option for starting DigiFlow is to double-click in

Windows Explorer on a file associated with DigiFlow.
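The command line arguments and switches described above can also be assembled from a script, which is convenient when each experiment lives in its own directory. The following Python sketch is illustrative only: the function names digiflow_command and start_digiflow are hypothetical, it assumes DigiFlow is on the search path, and placing the switches before the file name is an assumption, since this guide does not specify an order.

```python
import subprocess
from pathlib import Path


def digiflow_command(target=None, switches=(), exe="DigiFlow"):
    """Build the argument list for starting DigiFlow.

    target   -- optional image, movie or dfc file to load or run
    switches -- command line switches such as '/con' or '/bitflow:1'
    """
    args = [exe]
    for switch in switches:
        if not switch.startswith("/"):
            raise ValueError(f"switch must start with '/': {switch!r}")
        name, colon, value = switch.partition(":")
        # The guide requires no space between the colon and the value.
        if colon and (value == "" or value[0].isspace()):
            raise ValueError(f"no space allowed after the colon: {switch!r}")
        args.append(switch)
    if target is not None:
        args.append(str(target))
    return args


def start_digiflow(project_dir, target=None, switches=()):
    """Start DigiFlow in project_dir so that its status files are kept there."""
    project_dir = Path(project_dir)
    project_dir.mkdir(parents=True, exist_ok=True)
    return subprocess.Popen(digiflow_command(target, switches), cwd=project_dir)
```

Setting the working directory through cwd plays the same role as the Start in setting of a shortcut: DigiFlow_Status.dfs and DigiFlow_Dialogs.dfs are then kept with the experiment rather than with the program.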

3.2 Main Window

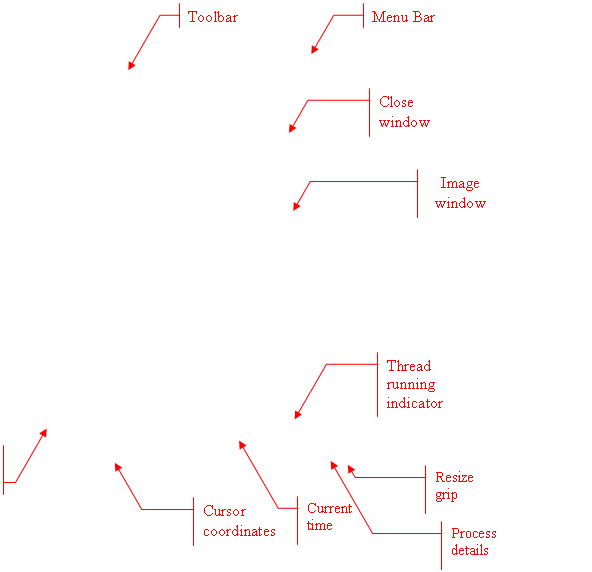

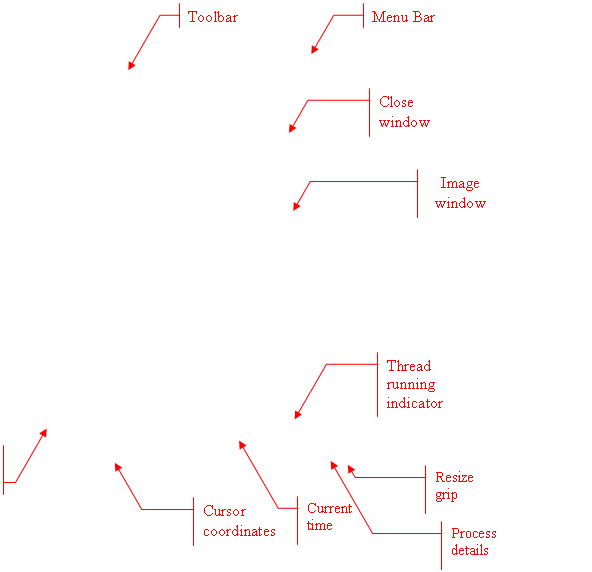

The main DigiFlow window follows that common for most

applications with a Multiple Document Interface (MDI). The menu bar at the top

provides access to the majority of the facilities, while the toolbar underneath

gives a more convenient method of accessing the more widely used functions. A

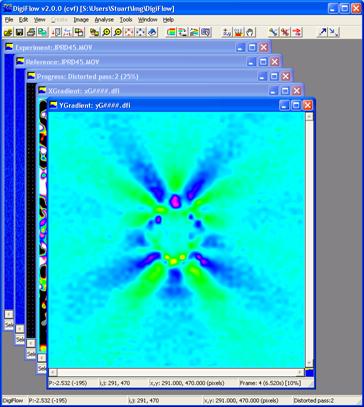

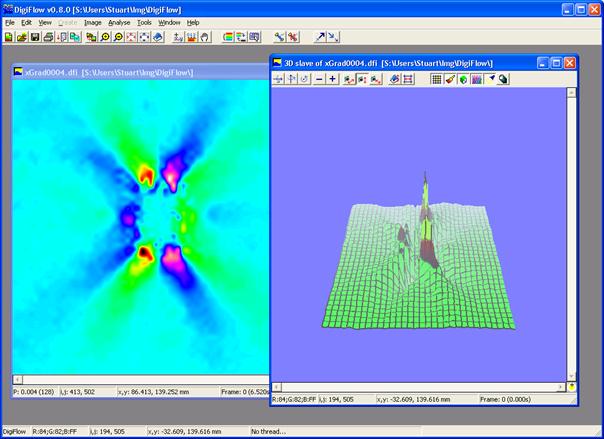

typical example is shown in figure 7.

Figure 7: The basic DigiFlow window.

As is normally the case for Windows applications, the main

window and the client windows may be resized by dragging the frame of the

window. Holding down the control key, while dragging the boundary of a client

window, will cause the contents of the window to be zoomed so as to make the

best use of the available space. If you do not hold down the control key, then

the window size is changed without changing the zoom applied to its contents.

3.3 Obtaining help

As is common with most Windows applications, help while using DigiFlow can be obtained by pressing the F1 key. This will start an instance of Internet Explorer and bring up an HTML copy of this manual. DigiFlow will automatically scroll to the position within the manual that is most relevant to the dialog or process you have open at the time. Subsequent presses of F1 will utilise the same tab in Internet Explorer, provided this remains open.

Some users, particularly when first starting, may prefer to have the manual automatically keep track of their activities. This can be achieved by turning on the AutoHelp facility, either from the Help menu, or by clicking the question mark button on the toolbar. When activated, an instance of the Internet Explorer browser will be started and will track your activity, providing timely help.

As an alternative to the HTML version of this manual, the manual is also provided as DigiFlow.pdf.

3.4 Image Selectors

DigiFlow uses image selectors to specify image streams for

input to and output from a given process. Four types of image stream are

supported:

Single images. These contain just a single image.

Movie. A movie contains multiple images stored

in a single file.

Sequence. A sequence is a collection of related files, typically identified by a numeric part of the file name that

increases by one between neighbouring images in the sequence.

Collection. A collection is a group of image files that have no special relationship to each other. Collections may be

subdivided into two groups: homogeneous collections and heterogeneous

collections. In a homogeneous collection, all the images within the

collection have the same format (same size, colour depth, file type, etc.).

With a heterogeneous collection, the format may vary from one image to another.

At present, most processes within DigiFlow do not support heterogeneous collections.

Image selectors may specify not only raster format image

files, but also vector format files. DigiFlow supports many standard raster

formats, including .bmp,

.tif, .gif, .png, .jpg, and .avi along with

special formats to provide backward compatibility with DigImage (.pic and .mov, the latter now

renamed .dfm in

DigiFlow). DigiFlow also introduces the new DigiFlow Image format, .dfi, to allow images

to be saved with full floating point precision, and the DigiFlow Pixel format (.dfp) provides text

output specifically tailored for raster images.

Vector format files include Enhanced Metafiles (.emf), Windows Metafiles (.wmf) and the DigiFlow Drawing format (.dfd).

The last of these provides output formatted as plain text containing both data

and drawing commands. This text may be imported into other applications, or

read back into DigiFlow to reconstruct the image or drawing it represents. If

GhostScript is installed on the system (see GettingStarted.pdf), then Encapsulated

PostScript (.eps)

files can also be opened with DigiFlow.

DigiFlow also provides a specialised file format (.dft) for storing

particle tracking data. While these may be treated as images, in general the

functionality available through the specialised particle tracking facilities is

to be preferred.

The specialised DigiFlow and DigImage formats (.dfm, .dfi, .dfp, .dft and .dfd) are described more thoroughly in §11.2.

3.5 Archive files

The concept of an archive file was introduced to DigiFlow in

version 3.4 as a method of both collecting multiple image files that form part

of a sequence, and of storing additional details about an image or a sequence

of images when the file format being used does not provide a mechanism for

storing this information.

DigiFlow archive files use a .dfa extension appended on to the

corresponding image file or sequence name. For example, if a .dfa file is generated for a sequence of

images image000.png,

image001.png, … image732.png, then the

name of the .dfa

file will be image###.png.dfa.
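The naming convention above can be sketched in a few lines of Python (used here purely for illustration; this is not dfc code). The helper names expand_sequence and archive_name are hypothetical, and the sketch assumes that the run of hashes stands for a zero-padded frame number of the same width.

```python
def expand_sequence(pattern: str, first: int, last: int) -> list:
    """Expand a hash pattern such as image###.png into individual file names.

    Assumption: the pattern holds exactly one contiguous run of '#', and each
    '#' stands for one digit of a zero-padded frame number.
    """
    width = pattern.count("#")
    hashes = "#" * width
    if width == 0 or hashes not in pattern:
        raise ValueError("pattern must contain one contiguous run of '#'")
    return [pattern.replace(hashes, f"{n:0{width}d}")
            for n in range(first, last + 1)]


def archive_name(selector: str) -> str:
    """Name of the archive file: .dfa appended to the image or sequence name."""
    return selector + ".dfa"


if __name__ == "__main__":
    names = expand_sequence("image###.png", 0, 732)
    print(names[0], names[-1], archive_name("image###.png"))
```

Appending .dfa to the complete selector name, rather than replacing the image extension, keeps the archive visibly paired with the sequence it describes.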

If the use of archive files is enabled, then when reading

the image sequence back in as image###.png,

additional information not present in the .png files themselves (such as the

method used to construct the sequence and the timing of the sequence) will be

recovered from the .dfa

file. Alternatively, attempting to open image###.png.dfa will both read the

sequence image###.png

and recover the additional information from the .dfa file.

The use of DigiFlow archive files is enabled or disabled

through the Open Image

and Save Image

dialogs (see §4.1 and §4.2). For some builds of DigiFlow, .dfa generation is disabled by default.

Under these circumstances, .dfa

support may be turned on by including the switch /dfa on the command line.

For further details on the format of .dfa files, refer to §12.11.

3.6 Sifting

A key concept associated with input image streams is sifting. In DigiFlow, sifting is the process by which images are extracted from an input stream. The extraction process may result in all the images being extracted, or only a subset of images (typically specified by a start number, an end number and a step). It may also result in a subregion of the image (a rectangular window within the image) being returned, or in the image being modified to conform to some reference. Further details of the sifting process are given in §4.3.
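As a rough illustration of the idea (in Python rather than dfc code), the sketch below sifts a stream held as a list of images, each image being a list of rows of pixel values. The function name sift and its parameters are hypothetical. The end frame and the window bounds are assumed to be inclusive, and rows are assumed to be numbered from the top of the image.

```python
def sift(frames, start=0, end=None, step=1, window=None):
    """Return the selected frames, optionally cropped to a rectangular window.

    frames : list of images; each image is a list of rows of pixel values
    start, end, step : frame numbers to extract (end is inclusive)
    window : (left, top, right, bottom) in pixels, inclusive, or None
    """
    if end is None:
        end = len(frames) - 1
    selected = frames[start:end + 1:step]
    if window is None:
        return selected
    left, top, right, bottom = window
    # Crop every selected image to the same window.
    return [[row[left:right + 1] for row in image[top:bottom + 1]]
            for image in selected]


if __name__ == "__main__":
    stream = [[[f, f + 1], [f + 2, f + 3]] for f in range(10)]
    print(sift(stream, start=2, end=8, step=3, window=(1, 0, 1, 1)))
```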

3.7 DigiFlow Macros

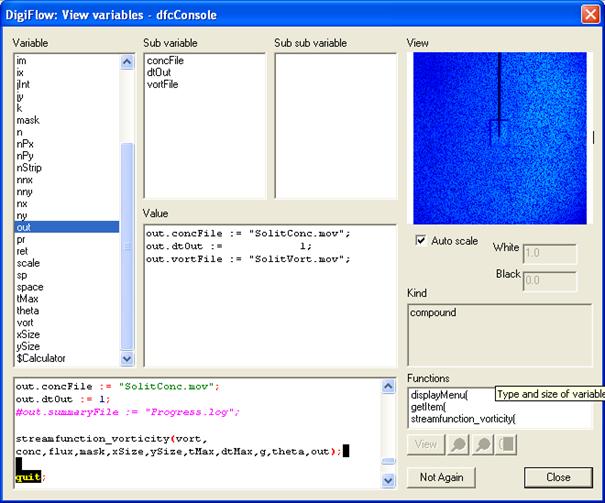

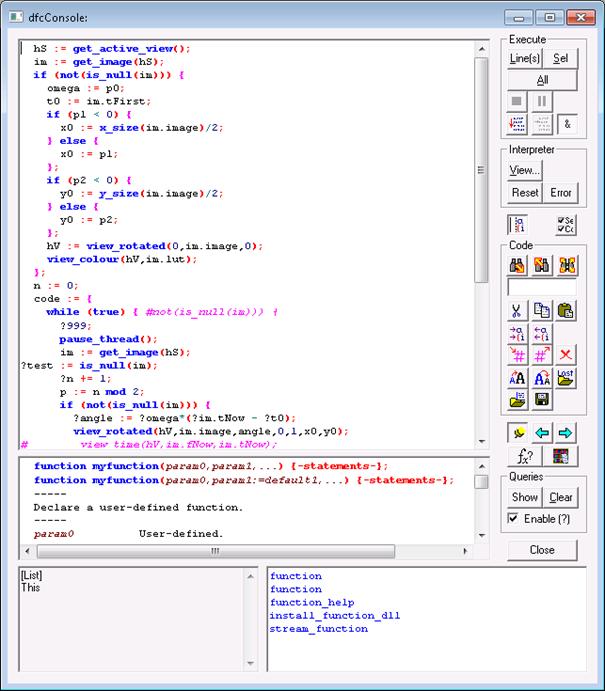

DigiFlow includes a powerful interpreter and associated macro language. The language is referred to as dfc code. While the programming language for dfc code is specific to DigiFlow, it follows the general syntax and conventions of many other modern high-level languages. In addition to the basic functionality expected of such languages, DigiFlow provides a vast range of functions tailored specifically to tasks for which DigiFlow is ideal. These range from image processing functions (from contour tracing to Fast Fourier Transforms) and data analysis functions (such as statistics and least squares fits) to numerical solution of the equations of motion (e.g. Godunov solution of the shallow water equations and a stream function-vorticity formulation for two-dimensional Boussinesq flows).

The present manual contains introductory documentation for

the use of dfc functions

and code. However, much of the detailed documentation for the individual dfc functions is to

be found in the interactive help system Help: dfc Functions. The most convenient

way of accessing this is frequently through the dfcConsole feature described in §5.2.10. The DigiFlow macros\ subdirectory (found in the

folder where DigiFlow is installed) contains a number of documented examples of

macro code.

3.8 Threads

One important aspect of DigiFlow is that it supports not only multiple image windows, but also multiple processing threads. This has two important benefits. First, it allows DigiFlow to continue to be used interactively while it is simultaneously processing one or more sequences of images, thus allowing real-time inspection of the progress. Second, for PCs with multiple processors, the execution time of a single process can be greatly reduced. (It should also be noted that more than one copy of DigiFlow may be used simultaneously.)

If the user attempts to close a window that is in

use with an active thread, then the system will warn the user that closing the

window will also kill the thread. Depending on the version of DigiFlow you are

using, windows that are playing a role in an active thread have the name of the

thread indicated in the window status bar at the bottom of the window and have

a ‘sloshing tank’ symbol in the bottom right-hand corner.

The user may also control the individual threads more directly, stopping them, pausing or resuming them, or changing their priority. This is achieved through the View Threads menu item (§5.3.10), or the corresponding button on the main toolbar. (All active process threads may be suspended by clicking the relevant button on the toolbar.)

3.9 Text output

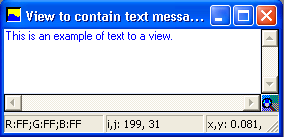

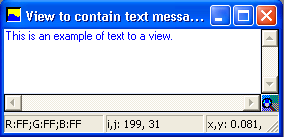

Some of DigiFlow’s features include graphical and text

output. In such cases it may be desirable to include more than simple plain

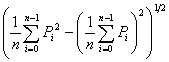

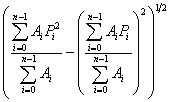

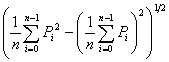

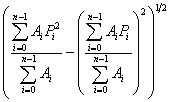

text. To achieve this, fully licenced copies of DigiFlow support LaTex-like

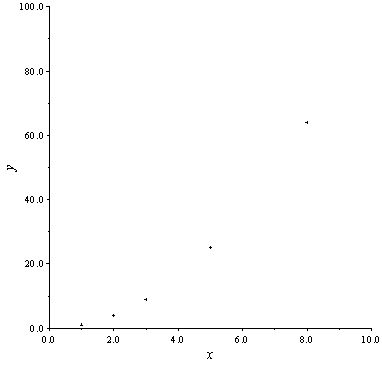

math-mode text formatting. For example, in Analyse: Time Series: Summarise (see §5.6.1.6) it is possible to specify the titles of the axes

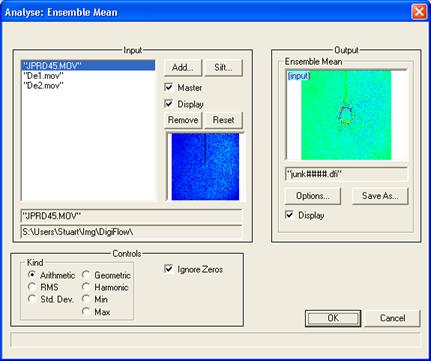

of the graph produced. Specifying it as the string "Dimensionless height

$\big(\frac{h}/{\alpha^2H_0}\big)$" would produce the label

.

.

Although DigiFlow does not understand the full range of

LaTeX commands and macros, it can interpret those most likely to be of use in

figures and graphs. The list includes:

Upper and lower case Greek letters (e.g. \Alpha or \zeta)

$

\!

\#

\$

\%

\&

\,

\2dots

\:

\;

\aleph

\angle

\approx

\backslash

\bar

\bf

\big

\BIG

\Big

\bigsizes

\bullet

\cdot

\circ

\copyright

\cos

\cosh

\dagger

\ddagger

\dddot

\ddot

\div

\dot

\dots

\downarrow

\Downarrow

\ell

\equiv

\euro

\exists

\exp

\footnotesize

\forall

\frac

\ge

\geq

\gg

\hat

\HUGE

\huge

\Im

\in

\infty

\int

\it

\langle

\LARGE

\large

\Large

\lbrace

\lbrack

\le

\left(

\left[

\Leftarrow

\leftarrow

\Leftrightarrow

\leftrightarrow

\leq

\ll

\ln

\log

\mathbf

\mathit

\mathrm

\minus

\mp

\nabla

\ne

\neq

\normalsize

\notin

\oplus

\oslash

\otimes

\overchar

\P

\partial

\phantom

\pm

\pounds

\prime

\prod

\propto

\qquad

\quad

\rangle

\rbrace

\rbrack

\Re

\right)

\right]

\rightarrow

\Rightarrow

\S

\scriptsize

\sim

\simeq

\sin

\sinh

\small

\sqrt

\subset

\subseteq

\sum

\supset

\supseteq

\surd

\tan

\tanh

\textbf

\textit

\textnormal

\textrm

\therefore

\tilde

\times

\tiny

\underline

\Uparrow

\uparrow

\wedge

\wp

\yen

\\

\^

\_

\{

\}

\~

There are some minor restrictions and additional requirements for the DigiFlow LaTeX-like syntax compared with standard LaTeX. For example, the standard LaTeX $\left(\frac{a}{b}\right)$ should be stated in DigiFlow as $\left({\frac{a}{b}}\right)$. The additional pair of braces tells DigiFlow that the fraction a/b is controlling the size of the large left bracket. This additional pair of braces does not affect the processing of the string by LaTeX. A further difference is that DigiFlow accepts LaTeX macros such as \alpha whether or not they are in ‘maths mode’ (i.e. between $..$).

This LaTeX-like text formatting (available only with fully licensed

copies of DigiFlow) may also be used in dfc code, for example through the draw_text(..), draw_axes(..) and plot_titles(..) commands.

It is not possible to define additional LaTeX-like macros

from within LaTeX-like formatted text. However, additional macros may be

defined from within dfc

code; see §11.4 and the dfc help for further details.

A powerful feature of this component of DigiFlow is the way

it works to support the use of Encapsulated PostScript (.eps) files in LaTeX through the psfrag

macro package. See §5.1.12 for further details.

4 Common dialogs

4.1 Open Image

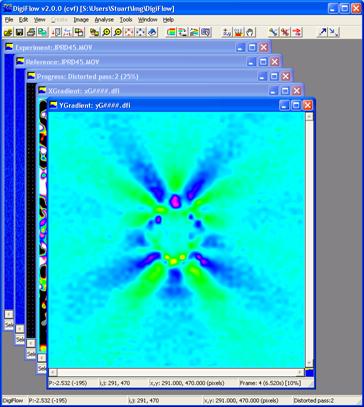

The Open Image dialog box is used throughout DigiFlow to open source image selectors (§3.4).

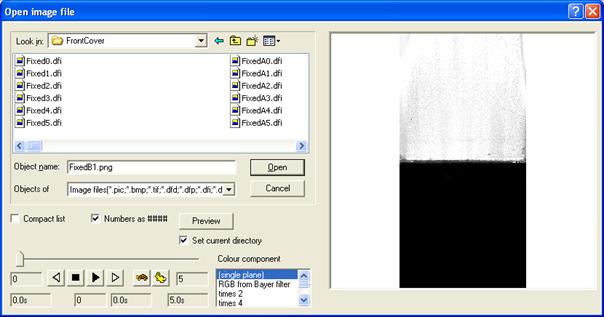

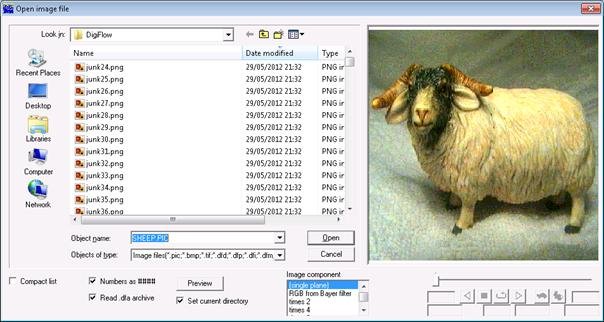

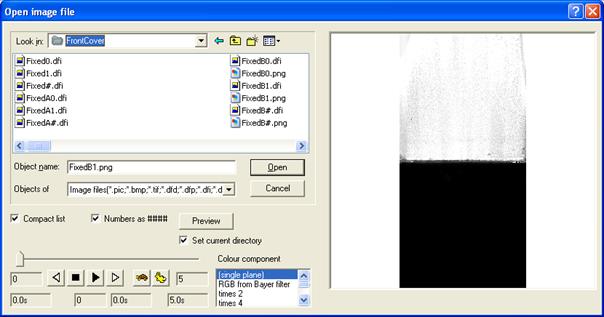

Figure 8: The Open Image dialog box under Windows XP. (a) Showing all files and (b) using the Compact list option.

The Open Image dialog box consists of a standard

Explorer-style display of folders, files, file types, etc., along with a

preview pane on the right-hand side. This preview pane will attempt to display

the currently selected file.

DigiFlow supports a range of industry standard image

formats, plus some special formats. The special formats both provide

compatibility with the earlier DigImage system, and provide facilities (e.g.

floating point data representation) not found in industry-standard formats.

These non-standard formats are described in more detail in §11.2 (DigiFlow drawing format) and §12

(DigiFlow image file formats). Note that DigiFlow expects the user to specify

the extension of the file. It is therefore important that all extensions are

visible in the dialog (refer to §2.2 for how to

achieve this).

To select a single image or a movie, simply click on the

name of the file containing this object. If you prefer, the name of the file

may be typed at the File name

prompt. If you type in the file name a preview will not be generated

automatically, but can be requested by clicking the Preview button. If manually entering the

file name, then it is important that you specify the file extension to remove

any potential ambiguity.

To select a sequence, the name of the sequence must be typed

at the File name

prompt, using hashes (#) to indicate the varying numeric part of the file name.

Alternatively, click on any member of the sequence and check the Numbers as #### box.

This will convert (starting from the right-hand end of the file name) any

digits found into the appropriate number of hash characters, thus allowing easy

specification of the sequence. However, numbers enclosed in parentheses or

square brackets (i.e. (..)

or [..]) will

not be converted to hashes. This allows numeric data to be included

unambiguously in the file name. Again, the Preview button may be used to generate a

preview if it is not generated automatically.
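The conversion performed by the hash check box can be approximated as follows. This is only a sketch in Python (not dfc code) with a hypothetical function name. It assumes that only the right-most run of digits outside parentheses or square brackets is converted, and that the file extension is left untouched; the text above does not state either detail.

```python
import re


def numbers_to_hashes(file_name: str) -> str:
    """Replace the right-most unbracketed run of digits with hash characters."""
    stem, dot, ext = file_name.rpartition(".")
    if not dot:  # no extension present
        stem, ext = file_name, ""
    # Spans enclosed in (..) or [..] are protected from conversion.
    protected = [m.span() for m in re.finditer(r"\([^)]*\)|\[[^\]]*\]", stem)]
    runs = [m for m in re.finditer(r"\d+", stem)
            if not any(s <= m.start() < e for s, e in protected)]
    if not runs:
        return file_name
    last = runs[-1]
    stem = stem[:last.start()] + "#" * len(last.group()) + stem[last.end():]
    return stem + dot + ext


if __name__ == "__main__":
    print(numbers_to_hashes("image007.png"))
    print(numbers_to_hashes("run(12)_0042.tif"))
```

In the second call the bracketed 12 is treated as data belonging to the name, so only the trailing frame number becomes part of the sequence specification.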

The precise behaviour of the Compact list check box depends on which

version of Windows you are using. The motivation, however, is to provide a more

compact way of accessing a large number of numbered images in a given folder.

Under Windows XP, the Compact list check box will provide a more compact summary of the files present by displaying the names of the first few in a given sequence, and using the compact hash notation to summarise the rest. An example of this is given in figure 8, where figure 8a shows all the files (without Compact list checked) and figure 8b shows how the number of files visible is decreased and sequences are replaced by hashes in the file name when the Compact list box is checked. Selecting the summary containing hashes is equivalent to selecting the entire series. (Note that clicking on Compact list will retain the files specified at the Object name prompt, but remove any selection in the view window.)

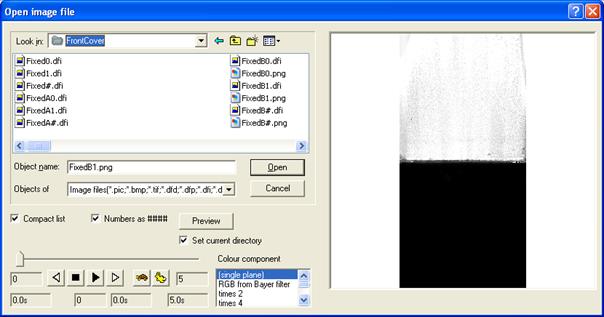

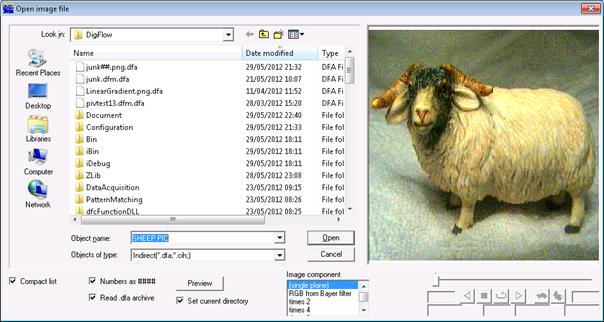

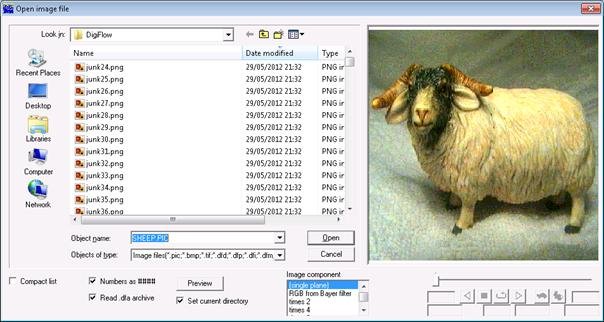

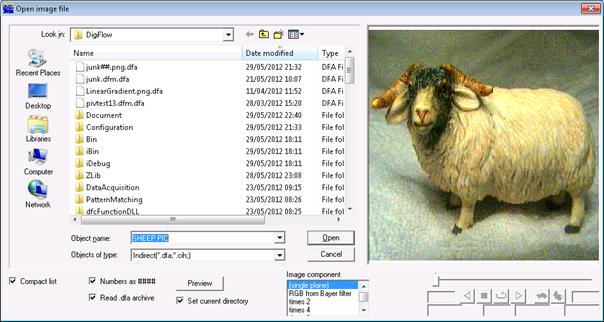

Unfortunately, this simple Compact list option is not available

under Windows Vista or Windows 7. Instead, checking Compact list searches for .dfa DigiFlow archive

files (see §3.5) and displays only them. Provided all the images and sequences

have been created with the archive facility enabled, then the net effect is

very similar. An example of this is shown in figure 9.

Whichever version of Windows you are using, if you select an

image file (rather than a .dfa

file) then the .dfa

file is read only if the Read

.dfa archive box is checked. However, if you select the .dfa file itself, then

it will always be read, along with the image or sequence of images.

Figure 9: The Open Image dialog box under Windows 7. (a) Showing all files and (b) using the Compact list option. Note that only those files for which a .dfa archive file was generated will be shown when the Compact list box is checked.

Note that the settings of the Number as ####, Compact list and Read .dfa archive check boxes are remembered from one invocation of the dialog to the next.

A collection of images may be specified using the mouse in combination with the <shift> key to select a range of files, or the <ctrl> key to select or deselect individual files. Alternatively, the names may be typed at the File name prompt, each name enclosed by double quotation marks. The collection is sorted into alphabetical order for display and processing. (If a collection is specified in this manner then any hash characters will be interpreted as literal hashes rather than as the numeric part of a sequence. Similarly, the Number as #### check box will be ignored.) In general, a sequence is preferable to a collection as it offers a greater level of control.

A collection of images may also be selected using wildcards. This may be achieved in two ways. If you use the standard Windows wildcards (? to represent a single character, and * to represent a variable number of characters) then the dialog will display only those files that fit the description; you may then select them in the normal manner. Alternatively, you may use % in place of ? and $ in place of * to do the selection directly. For example, typing Sheep*.* will cause the dialog to display sheep2.tif, sheep.bmp, sheep.jpg, sheep.pic and sheep.tif, which may then be selected using the mouse and shift key. Alternatively, Sheep$.$ will achieve the same result, selecting all five files.
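The direct-selection wildcards can be emulated outside DigiFlow by translating them into the standard ones. The Python sketch below (not dfc code) uses the standard-library fnmatch module; the function name select_collection is hypothetical, and the case-insensitive matching and alphabetical sort are assumptions based on normal Windows behaviour and on the description of collections above.

```python
from fnmatch import fnmatchcase


def select_collection(pattern, file_names):
    """Select files with % (one character) and $ (any number of characters)."""
    # Translate the DigiFlow-style wildcards into the standard ? and *.
    standard = pattern.replace("%", "?").replace("$", "*").lower()
    matches = [name for name in file_names
               if fnmatchcase(name.lower(), standard)]
    # Collections are described as being sorted alphabetically.
    return sorted(matches, key=str.lower)


if __name__ == "__main__":
    folder = ["sheep2.tif", "goat.bmp", "sheep.bmp", "sheep.jpg",
              "sheep.pic", "sheep.tif"]
    print(select_collection("Sheep$.$", folder))
```

Note that fnmatch also gives square brackets a special meaning, so this sketch is not suitable for file names containing them.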

If the selected image contains true colour, then the Colour component list box is enabled. This list box allows selection of whether the image is to be treated as full colour, or how the colour information is converted to a greyscale for processing by DigiFlow. For example, selecting RGB will allow DigiFlow to process the red, green and blue image planes separately (where this makes sense), while green will take the green component of the colour image and treat it as a greyscale image, and hue will process the colour using a hue/saturation/intensity representation of the image. The options greyscale and mean produce a similar effect, although the precise details of how the resulting image is constructed from the red, green and blue components differ. The table below gives the relationships.

| Key | Returns | Comments |
|---|---|---|
| RGB | Three colour planes | Full colour image |
| Mono | 0.11*red + 0.59*green + 0.30*blue | Same as grey. |
| Red | red | Red component only. |
| Green | green | Green component only. |
| Blue | blue | Blue component only. |
| hue | | Image hue (colour) |
| saturation | | Image saturation (purity) |
| intensity | | Image intensity (brightness) |
| cyan | 1 – red | |
| magenta | 1 – green | |
| yellow | 1 – blue | |
| grey | 0.11*red + 0.59*green + 0.30*blue | Same as mono. |
| mean | (red + green + blue)/3 | Mean of three components. |
| max | max(red, green, blue) | The brightest component. |
| min | min(red, green, blue) | The darkest component. |
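The arithmetic relationships in the table can be checked with a short Python sketch (not dfc code). The function name colour_component is hypothetical, the weights are copied from the table exactly as printed, and the components are assumed to be scaled to the range 0 to 1. The hue, saturation and intensity options are omitted because the table gives no formula for them.

```python
def colour_component(key, rgb):
    """Return one scalar component for an (r, g, b) pixel with values in 0..1."""
    r, g, b = rgb
    weighted = 0.11 * r + 0.59 * g + 0.30 * b  # weights as listed in the table
    table = {
        "red": r,
        "green": g,
        "blue": b,
        "cyan": 1.0 - r,
        "magenta": 1.0 - g,
        "yellow": 1.0 - b,
        "mono": weighted,
        "grey": weighted,
        "mean": (r + g + b) / 3.0,
        "max": max(r, g, b),
        "min": min(r, g, b),
    }
    return table[key.lower()]


if __name__ == "__main__":
    pixel = (0.2, 0.6, 0.9)
    for key in ("grey", "mean", "max", "min", "cyan"):
        print(key, colour_component(key, pixel))
```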

An image containing only a single plane of data may contain

colour information if captured from a camera fitted with a Bayer colour mosaic

filter. To provide support for this and since it is unlikely that the image

file will contain information that DigiFlow can use to automatically detect

such an image, when DigiFlow detects a single plane of data in the image to be

opened it provides the following supporting options:

| Key | Returns | Comments |
|---|---|---|
| (single plane) | P | Standard image |
| RGB from Bayer filter | Three colour planes | Interpret as a full colour image using a standard Bayer filter layout |
| times 2 | 2*P | Intensities rescaled |
| times 4 | 4*P | Intensities rescaled |
| times 8 | 8*P | Intensities rescaled |
| times 16 | 16*P | Intensities rescaled |
| times 32 | 32*P | Intensities rescaled |
| times 64 | 64*P | Intensities rescaled |
| div 2 | P/2 | Intensities rescaled |
| div 4 | P/4 | Intensities rescaled |
| div 8 | P/8 | Intensities rescaled |
| div 16 | P/16 | Intensities rescaled |

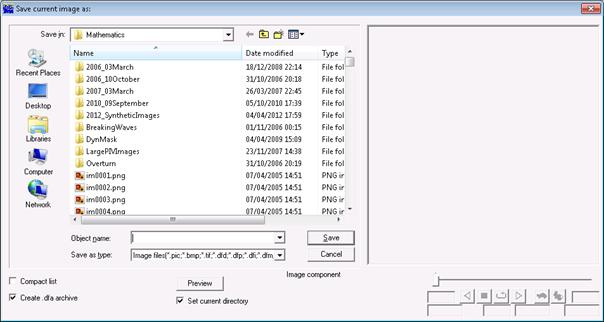

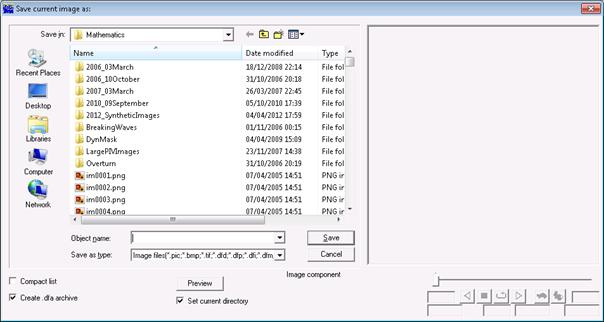

4.2 Save Image As

The Save Image As dialog is essentially the same as the Open Image dialog (§4.1), but is produced when the name of the output image selector (§3.4) is required.

Figure 10: The Save Image

As dialog box.

If an image selector of the same name does not exist

already, then the file name must be entered by typing at the File name prompt. The

extension to be used should be specified explicitly as DigiFlow uses this to

determine the file type to be created. It is therefore important that all

extensions are visible in the dialog (refer to §2.2

for how to achieve this). Simply selecting a type from the Save as type list will not necessarily

have the desired effect if more than one possible type is indicated.

Note that some file types have a range of options such as

bit depth and compression. These are normally controlled from outside the Save Image As dialog

box using the Options…

button in the parent dialog. Refer to §4.4 for

further details.

DigiFlow supports a range of industry standard image

formats, plus some special formats. The special formats both provide

compatibility with the earlier DigImage system, and provide facilities (e.g.

floating point data representation) not found in industry-standard formats.

These non-standard formats are described in more detail in §11.2 (DigiFlow drawing format) and §12

(DigiFlow image file formats).

The Compact

list check box operates in the same way as for the Open Image dialog

described in §4.1. Here, the Create

.dfa archive check box replaces the Read .dfa archive and causes DigiFlow to

create a .dfa

archive for the output it produces (see §3.5).

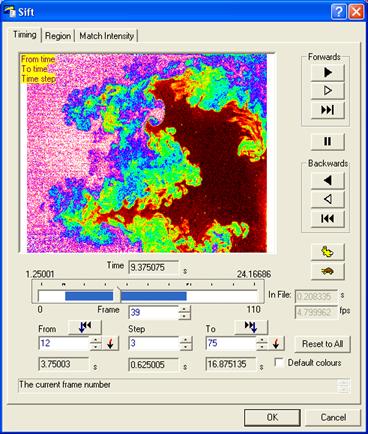

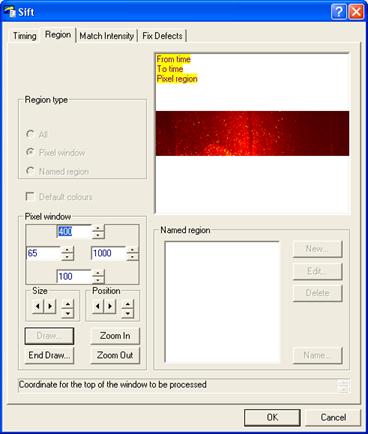

4.3 Sifting input streams

When processing an image stream it is often desirable to

select only a subset of the stream for processing. This subset may contain only

some of the images from the stream, and/or it may contain only part of each

image. Within DigiFlow this process of selecting a specific part of an image

stream for processing is referred to as ‘sifting’. When sifting is available,

the corresponding dialog will have a Sift… button (typically one for each input selector) that

starts a tabbed dialog box controlling the sifting process. The following

subsections describe the various sifting options.

4.3.1 Selector timing

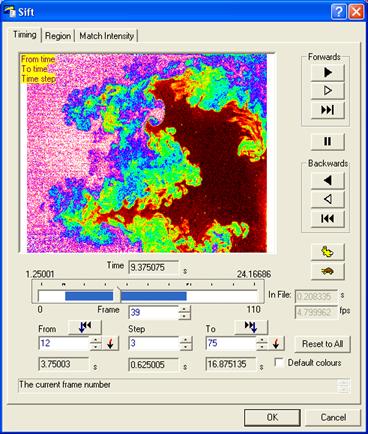

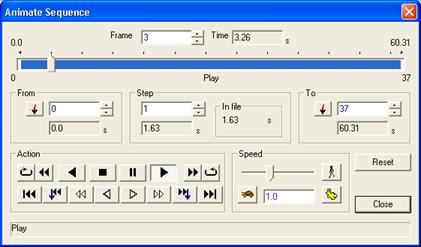

The Selector Timing tab of the Sift dialog allows the user

to specify which times from a multi-image image selector (§3.4) will be used for a process.

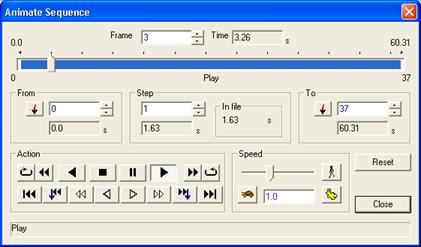

Figure 11: The standard

Selector Timing tab of the Sift dialog.

This tab allows the preview of the image selector and

specification of the processing start and end points as well as the step

between the images to be processed.

The buttons down the right-hand side allow the image

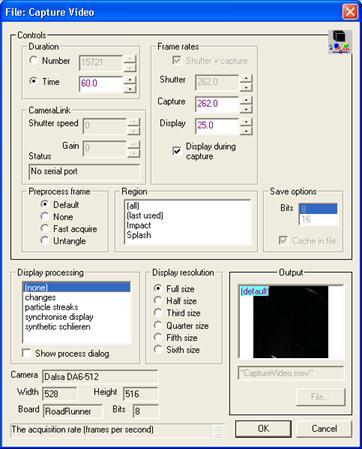

selector to be played, the speed of this preview controlled by the hare and

tortoise buttons. The slider allows the currently visible frame to be dragged

to any time. The Frame

edit box and spin control allow more precise movement of the preview frame. The

and

and  buttons move to the currently

specified limits for the processing.

buttons move to the currently

specified limits for the processing.

The frame numbers for the start and end points may be typed

in the From and

To edit boxes,

and the spacing in the Step

edit box. The corresponding time boxes below will be updated automatically.

Clicking the buttons adjacent to the From or To edit boxes will set the corresponding from or to position to the current position, shown by the slider and the edit boxes immediately above (time) and below (frame).

Alternatively (but less precisely), holding <shift> while

dragging the slider will allow specification of the timings.

When the From

and To times

are set, or Step

is not unity, then this information is displayed on a yellow background at the

top of the image preview.

For files that do not store timing information, DigiFlow assumes by default that the files are separated in time by one second. This may be changed using In file, in which the image spacing may be specified either in seconds or, using the lower of the two controls, in frames per second. These two controls are disabled for files that store time information, but display the relevant details.
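The relationship between frame numbers and times implied by these controls can be sketched as follows (Python, not dfc code). The function name frame_times and its parameters are hypothetical, and the sketch assumes that frame 0 corresponds to time zero and that the To frame is included.

```python
def frame_times(first, last, step=1, spacing=None, fps=None):
    """Times (in seconds) of the frames first, first + step, ... up to last.

    spacing : time between neighbouring images, in seconds
    fps     : alternatively, the frame rate in frames per second
    With neither given, the default of one second per image is used.
    """
    if spacing is not None and fps is not None:
        raise ValueError("give either spacing or fps, not both")
    frames = range(first, last + 1, step)
    if fps is not None:
        return [frame / fps for frame in frames]
    if spacing is None:
        spacing = 1.0  # default: images separated by one second
    return [frame * spacing for frame in frames]


if __name__ == "__main__":
    print(frame_times(0, 4, step=2))
    print(frame_times(0, 50, step=25, fps=25))
```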

Reset to

All resets the start and end points to include the entire

selector.

Checking the Default

colours control will cause the preview image to be displayed

using the DigiFlow default colour scheme rather than the colour scheme stored

in the image file.

4.3.2 Selector region

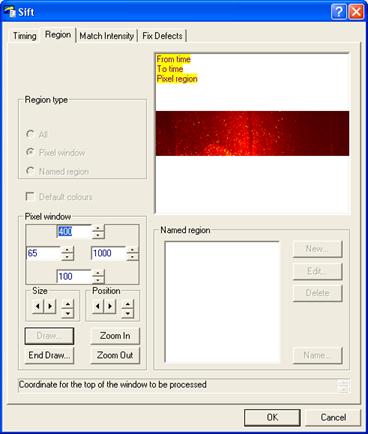

The Selector Region tab of the Sift dialog allows the user

to specify a region within an image selector (§3.4)

that will be used for a process.

Figure 12: The standard

Selector Region tab of the Sift dialog.

For a process requiring more than one input stream (and

hence having more than one image selector in its dialog box), one of the

streams (typically the first in the dialog box) will be the master

stream. If the region for this stream is changed, then the region for the other

(slave) streams will be changed automatically to conform to (typically

made the same as) that for the master stream. It remains possible, however, to

change independently the region for the slave selectors, provided the size of

the region for the slave selector is compatible with that for the master

selector.

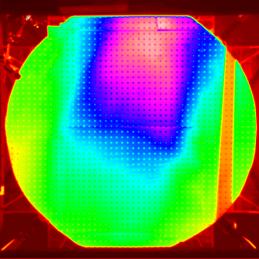

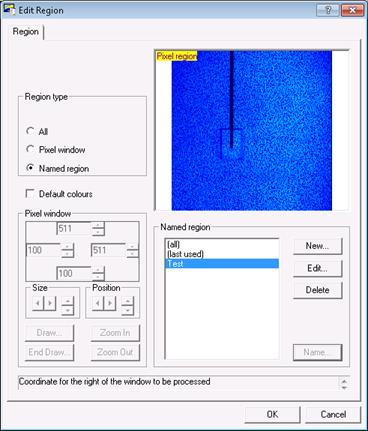

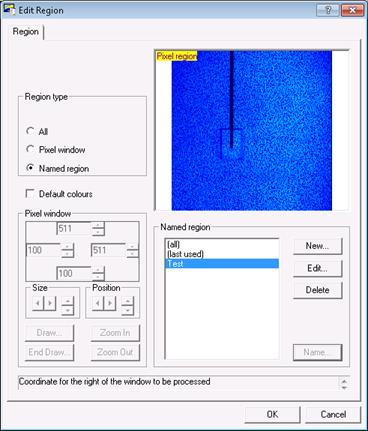

The type of region is selected by the Region type group of radio buttons. The

example shown in figure 12 is for a master

selector; the Conform

option is not available here, but would be visible above All when sifting slave selectors.

If Pixel

window is selected, the pixel coordinates of the left, right, top

and bottom of the window may be specified in the edit controls within the black

rectangle. If preferred, the size may be increased without shifting the centre

of the region, or the location of the region may be changed without adjusting

the size, using the Size

and Position

controls, respectively.
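The distinction between the Size and Position controls can be illustrated with a small Python sketch (not dfc code). The class PixelWindow and its methods are hypothetical; the sketch assumes inclusive pixel bounds with the top coordinate numerically smaller than the bottom.

```python
from dataclasses import dataclass


@dataclass
class PixelWindow:
    left: int
    right: int
    top: int
    bottom: int

    def size(self):
        """Width and height in pixels (bounds taken as inclusive)."""
        return (self.right - self.left + 1, self.bottom - self.top + 1)

    def centre(self):
        return ((self.left + self.right) / 2, (self.top + self.bottom) / 2)

    def resized(self, grow_x, grow_y):
        """Grow (or shrink, if negative) every side; the centre stays put."""
        return PixelWindow(self.left - grow_x, self.right + grow_x,
                           self.top - grow_y, self.bottom + grow_y)

    def moved(self, dx, dy):
        """Shift the window without changing its size."""
        return PixelWindow(self.left + dx, self.right + dx,
                           self.top + dy, self.bottom + dy)


if __name__ == "__main__":
    window = PixelWindow(left=100, right=199, top=50, bottom=149)
    print(window.resized(10, 5), window.moved(20, -10))
```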

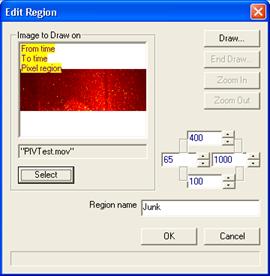

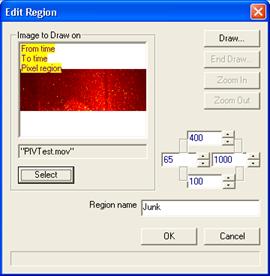

Alternatively, clicking the Draw button opens a full size window

that allows the window to be moved and resized dynamically using the mouse (see

figure 13). (Hint: it is sometimes worth dragging a

corner of the window to increase its size and thus make it easier to grab the

edge of the region window.) The Zoom

In and Zoom Out

buttons may be used to control the magnification while drawing. Similarly, you

may swap between this window and the Sift dialog box to use the various edit

and spin controls to move the region around. Click on the End Draw… button to close the drawing

window and re-enable the other controls on the Sift dialog.

Figure 13: Drawing a

region.

If Named

region is selected, then previously saved regions are displayed

and may be selected. This provides a convenient method of using the same region

in a range of different processes. The four buttons to the right of the list

box may be used to manage these named regions. New named regions may be created

either by clicking the New

button, in which case a subdialog is produced to allow specification of the

region, or by clicking the Name

button (when Pixel window

is selected) to give a name to a pixel window. The Edit button allows alteration of an

existing window, while Delete

removes the region from the list. Note that selecting a named region that is a

Pixel window will update the controls in the Pixel window group. Switching back to Pixel window allows

editing of these values, while Name

may be used to overwrite the old values with the new ones, or to create a copy.

Figure 14: Editing a

region.

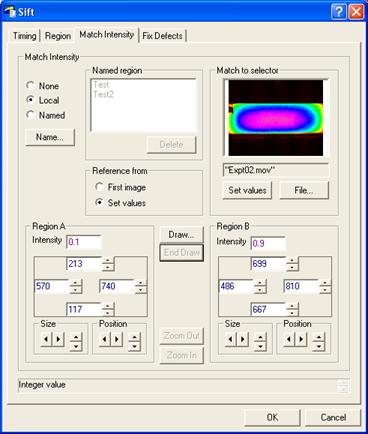

4.3.3 Matching intensities

Quantitative measurements often require that the intensities

are matched between different frames and sequences. The intensities of the raw

image streams may fluctuate due to a number of reasons. One common one is the

mismatch in frequencies between the illumination and the camera frame rate.

Depending on the type of light source and the shutter speed of the camera, this

mismatch may lead to a modulation of nearly 50% of the signal amplitude, while

automatic gain features can lead to similar results. While it is in general

best to avoid these problems by using continuous or high frequency light sources,

this is not always practical.

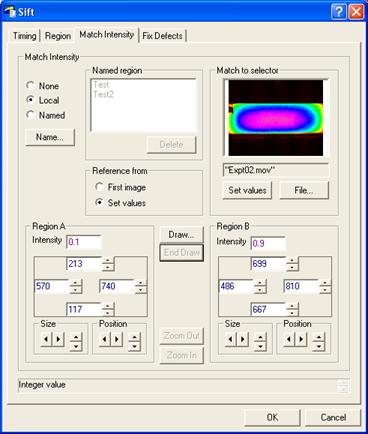

The Match

Intensity tab in the Sift dialog (figure 15)

provides a basic mechanism for correcting the intensities of input image

streams to match them to some fixed reference. The basic strategy is for the

image to contain two reference regions that contain approximately uniform

intensities that should not change with time. These two regions are then used

to generate a linear mapping between the input image and a reference intensity,

thereby adjusting the intensities in preparation for processing.
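The linear mapping described above can be written out explicitly. The following Python sketch (not dfc code) uses hypothetical function names and assumes that the intensity of each reference region is characterised by its mean value; the text does not say how DigiFlow reduces a region to a single intensity.

```python
def region_mean(image, left, right, top, bottom):
    """Mean intensity over a rectangular region (inclusive pixel bounds)."""
    values = [p for row in image[top:bottom + 1] for p in row[left:right + 1]]
    return sum(values) / len(values)


def intensity_mapping(a_measured, b_measured, a_reference, b_reference):
    """Gain and offset of the straight line taking the measured intensities
    of regions A and B onto their reference intensities."""
    if a_measured == b_measured:
        raise ValueError("the two regions must differ in intensity")
    gain = (b_reference - a_reference) / (b_measured - a_measured)
    offset = a_reference - gain * a_measured
    return gain, offset


def match_intensity(image, gain, offset):
    """Apply the linear mapping to every pixel of the image."""
    return [[gain * p + offset for p in row] for row in image]


if __name__ == "__main__":
    frame = [[0.2, 0.2, 0.6], [0.2, 0.4, 0.6]]
    a = region_mean(frame, 0, 0, 0, 1)  # dark reference region
    b = region_mean(frame, 2, 2, 0, 1)  # bright reference region
    gain, offset = intensity_mapping(a, b, 0.25, 0.75)
    print(match_intensity(frame, gain, offset))
```

Two regions are needed because a straight line has two unknowns: one region alone could fix either the gain or the offset, but not both.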

Figure 15: The

Match Intensities tab provides the ability to directly relate an image to

reference values.

The Match Intensity facility is turned on and off using the radio button group in the top-left. When off (None), the intensities are read without alteration. The Match Intensity facility can be enabled either using details provided locally (Local), or with details saved previously (Named), in a similar manner to that used for Regions.

A locally defined Match Intensity reference consists of a pair of rectangular regions, Region A and Region B. The location and size of these regions are set using the controls that specify the left, right, top and bottom of each of the rectangles. Additionally, as with the Regions dialog, the regions may be drawn on an image and dragged to their desired location by clicking the Draw… button (see figure 16).

Each region requires an intensity to be associated with it. When Reference from is set to Values, the Intensity controls in the Region A and Region B groups are enabled. The user may enter the desired (target) reference intensities here directly. Alternatively, use File in Match to selector to select a suitable image; the Match button will then read the intensities from the specified image. If Reference from is instead set to First image, then the reference intensities are not entered at this point, but rather are determined automatically from the first image in the stream to be processed.

Once the various controls for a Local Match Intensity have been set,

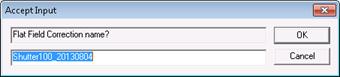

their values may be saved for use elsewhere by clicking Name…. This prompts for a user-supplied

descriptive name, saves the settings, and switches the dialog into Named mode.

Selecting an entry from Named matches loads the corresponding

settings for use. If you wish to alter the settings of a saved match, load it

by selecting from the list, then switch to Local mode. Make any necessary changes,

then click again on Name

to name and save it (you may re-use an existing name).

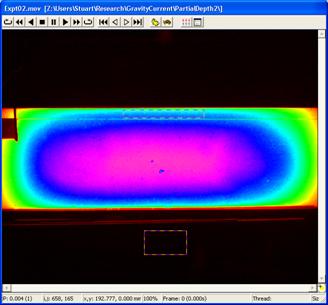

Figure 16:

Drawing regions for intensity matching.

4.4 Modifying output streams

This section describes the various modifications that may be

made to the output streams. These modifications are accessed via the Options… button in

the output stream select group. The precise contents of this dialog will vary

depending on the output file type that has been selected.

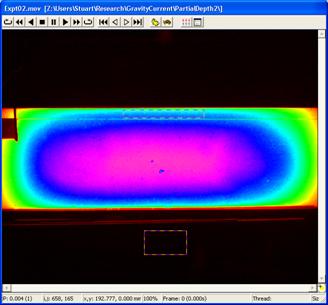

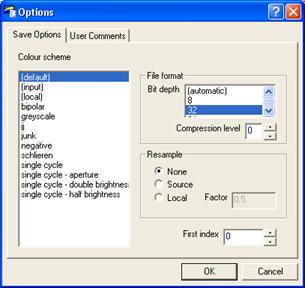

Figure 17: The Save Options dialog.

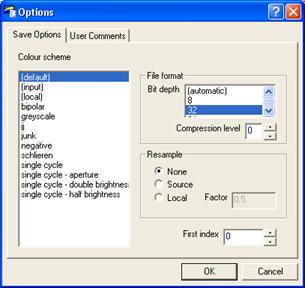

4.4.1

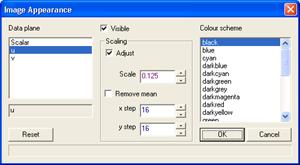

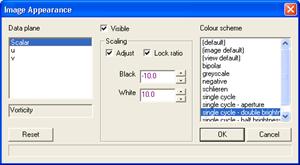

Setting output stream colour

The colour scheme for the output stream is selected from the

list of known colour schemes in the Colour scheme list box. Selecting the (input) member will set the colour

scheme to be the same as for the master input stream.

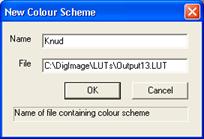

If you wish to add a new colour scheme or modify an existing scheme, you
must use the View: Colour Scheme… menu option. Refer to §5.3.6 for further
details.

4.4.2 Full colour

For output formats such as .bmp, .png or .jpg that support true colour
images, a Full colour checkbox is provided. If checked, the output is saved
in a 24 bit true colour format. If not checked, a greyscale version of the
output is saved, along with the selected false colour map (the false colour
map is not saved for .jpg files).

4.4.3 File format

The File format group contains any options that exist for the specified
file type. The contents of this group depend on the file type specified: in
many cases there are no options and so the group is left empty.

The Bit depth field determines the number of significant bits saved for
each pixel in the image. Most image formats use 8 bits, but for high
resolution images, or images that result from numerical computations, a
greater depth may be desired. If the .dfi format is specified for the file
type, then bit depths of 8, 32 and 64 bits are possible.

When available, the Compression level edit and spin control determines
whether the image is compressed using lossless compression. A value of zero
indicates no compression, with positive integers giving various levels of
compression. Typically, compressing an image reduces its size by around a
factor of two, but at the cost of slower access (although for a very slow
hard disk the access speed may improve with compression). The additional
time taken to compress an image will depend in part on the level of
compression requested, and in part on the structure of the image. If a
process seems particularly slow, but is still producing the correct answer,
try reducing the level of compression.

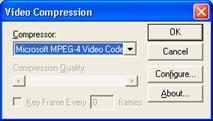

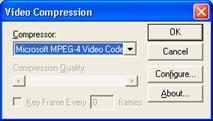

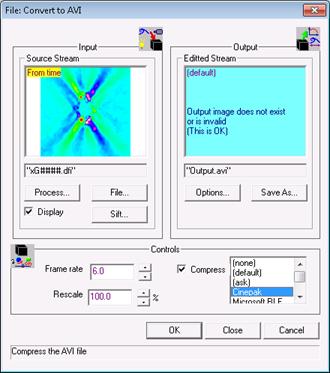

In the case of an .avi file, a Compression level of zero causes full,
uncompressed images to be saved, whereas a value of 1 uses Cinepak
compression (installed by default with Windows). For other compression
schemes, specify a value of 2; the standard Windows Video Compression
dialog will then be displayed when DigiFlow is ready to save the first
frame of the output stream. (Note that most of the .avi compression options
are ‘lossy’ in the sense that only an approximation to each image is
saved.)

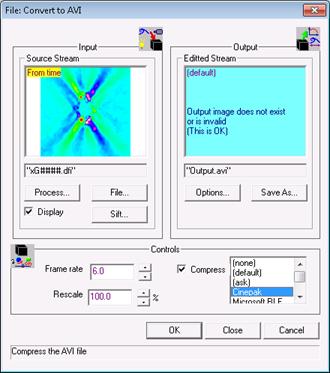

Figure 18: Standard Windows Video Compression dialog.

For .jpg images, the compression applied is ‘lossy’. The higher the level
of compression, the greater the fraction of information lost. This is
controlled by the Quality control. Note that in general the lossy nature of
the compression in .jpg images means that they should not be used for the
storage of intermediate results.

4.4.4 First index

By default, the first image in a sequence produced by

DigiFlow will be given a zero index (numerical part of the file name). The First index control

may be used to change the index for this first image. In either case,

subsequent images will always be produced with unit increments from this value.
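The numbering rule can be sketched as follows. The function names, the file name stem and the zero-padded four-digit pattern are hypothetical and chosen only for illustration; the actual file names depend on the output stream specified in DigiFlow.

```python
def output_indices(first_index, count):
    """Indices given to `count` output images: unit increments from first_index."""
    return [first_index + i for i in range(count)]


def output_names(stem, extension, first_index, count, digits=4):
    """Build illustrative file names; the zero-padded pattern is an assumption."""
    return [
        f"{stem}{index:0{digits}d}{extension}"
        for index in output_indices(first_index, count)
    ]


# Default behaviour: the first image has index zero.
default_indices = output_indices(0, 3)

# With First index set to 10, the sequence starts at 10 instead.
names = output_names("frame_", ".png", 10, 3)
```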

4.4.5 Resampling

When the .dfi image format is selected, it is possible to rescale the
output stream before it is saved and then reverse this rescaling when the
image is subsequently read in. Typically this option is used to reduce the
resolution of the saved image, but maintain its size by interpolating back
to the original size before using the image again.

This feature is enabled using the Resample check box. When enabled, the
resolution of the saved image is controlled by the Factor edit control,
which accepts a floating point value for the relative resolution of the
saved image. For example, a value of 0.5 halves the resolution in each
direction, so the saved image has only ¼ of the number of pixels of the
original in the file, but through interpolation the missing pixels are
reconstructed when the image is read in again. This option is particularly
valuable for use with images produced by the synthetic schlieren
(§5.6.4.3) and PIV (§5.6.5.2) facilities.
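The effect of Factor on the stored pixel count can be worked through with a short calculation. This is a sketch only: the function names are hypothetical, and rounding the saved dimensions to the nearest whole pixel is an assumption about how non-integer sizes would be handled.

```python
def saved_size(width, height, factor):
    """Dimensions of the stored image when each direction is scaled by `factor`.

    Assumption: dimensions are rounded to the nearest pixel, minimum of 1.
    """
    saved_width = max(1, round(width * factor))
    saved_height = max(1, round(height * factor))
    return saved_width, saved_height


def pixel_fraction(width, height, factor):
    """Fraction of the original pixel count that is actually stored."""
    saved_width, saved_height = saved_size(width, height, factor)
    return (saved_width * saved_height) / (width * height)


# A 1024 x 768 image saved with Factor = 0.5 is stored as 512 x 384,
# i.e. one quarter of the original number of pixels.
size = saved_size(1024, 768, 0.5)
fraction = pixel_fraction(1024, 768, 0.5)
```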

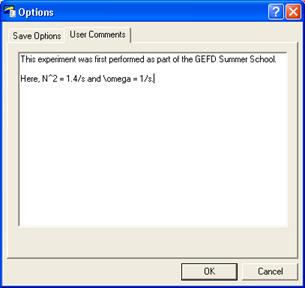

4.4.6 Save user comments

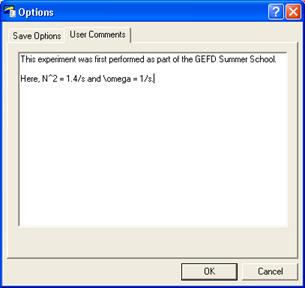

Figure 19: User comments tab.

Some file formats (e.g. .dfi, .dfd and .dft) allow user comments to be saved

along with the images. These comments are specified using the User Comments tab of

the Save Options dialog.

4.4.7

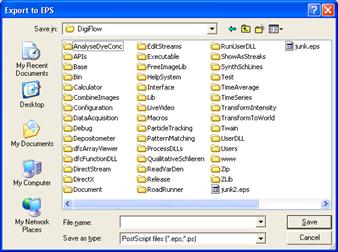

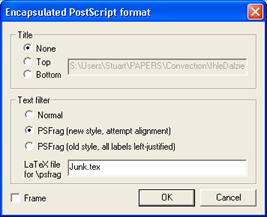

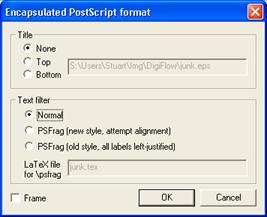

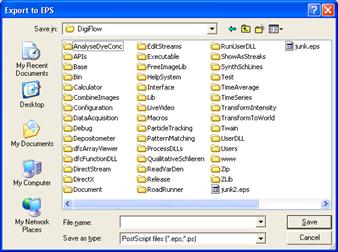

Encapsulated PostScript streams

DigiFlow can produce Encapsulated PostScript (.eps) output either

using the Export to EPS

option in the File

menu (see §5.1.12) or by specifying an .eps file as the

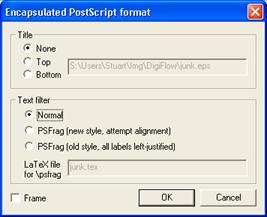

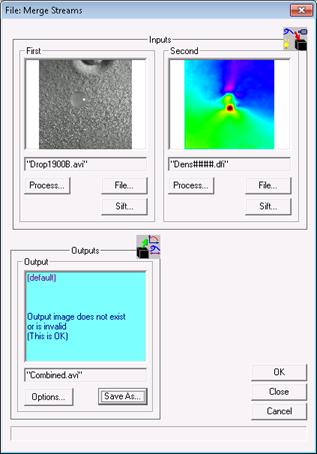

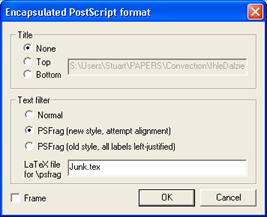

output stream. In the latter case the normal Options dialog has an additional EPS button that

invokes the dialog shown in figure 20.

Figure 20: The output options for Encapsulated PostScript (.eps) files.

The PostScript options provide the ability to add a title either above
(Top) or below (Bottom) the image or graphic output, and to add a frame
(Frame) around the output. DigiFlow (commercial version only) provides
support for the LaTeX \psfrag macro package. This enables the text produced
by DigiFlow to be readily replaced with text generated by LaTeX, thus
keeping font and style information consistent and allowing post-plotting
adjustment of the text labels, etc. Selecting Normal produces an .eps file
containing the original labels, whereas with either of the PSFrag options
the text is replaced by a unique character for each element. At the same
time, DigiFlow creates a .tex file that contains the mapping between these
characters and the original text. This .tex file can then be included in
the main LaTeX document to reproduce the figure. See §5.1.12 for further
information on the Encapsulated PostScript formatting options.

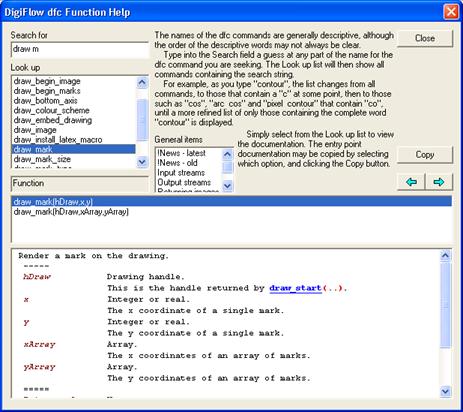

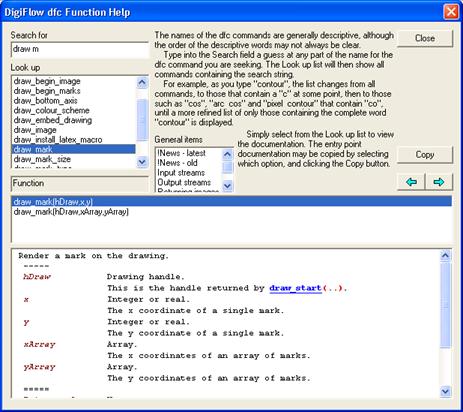

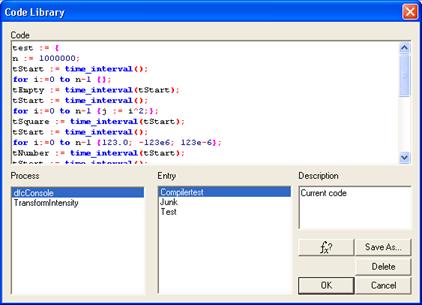

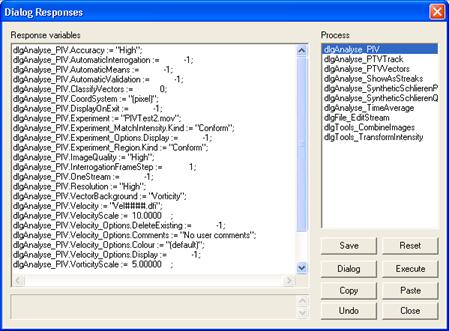

4.5 dfc Help

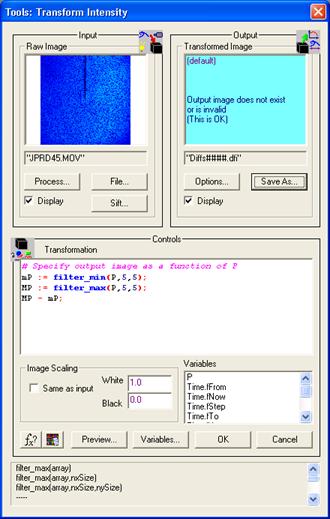

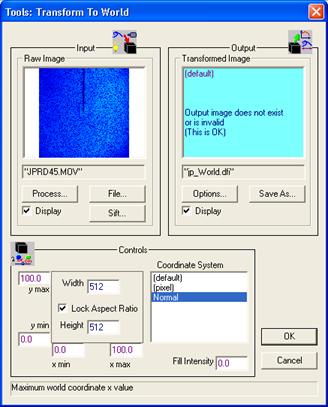

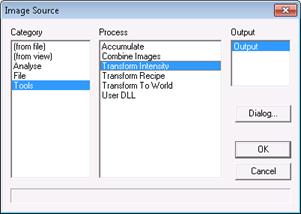

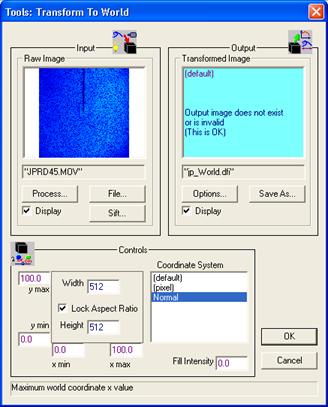

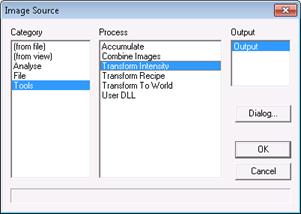

As will be seen in §5, a large

part of DigiFlow’s power and flexibility is gained by the use of user-supplied

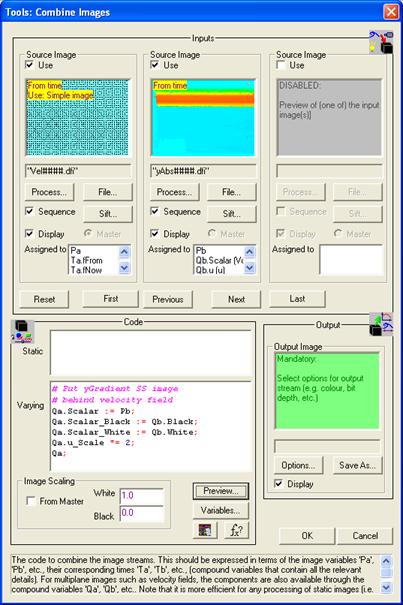

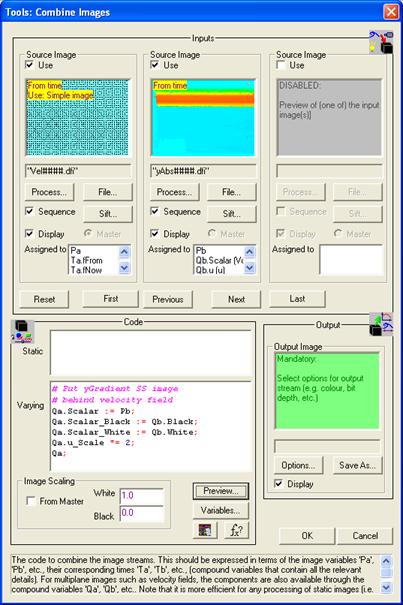

macro code. This code is known as dfc code. Examples of facilities that require such code

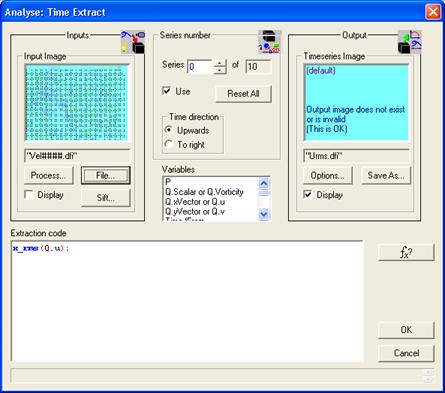

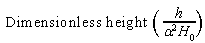

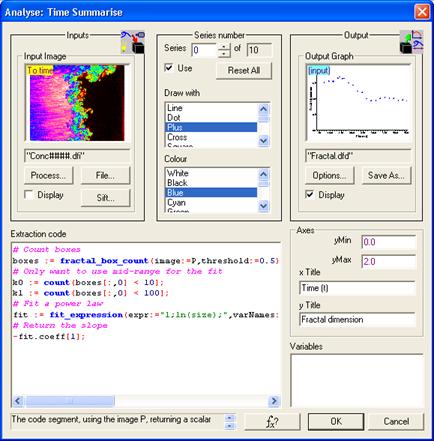

include Analyse: Time:

Extract (§5.6.1.5), Analyse: Time: Summarise

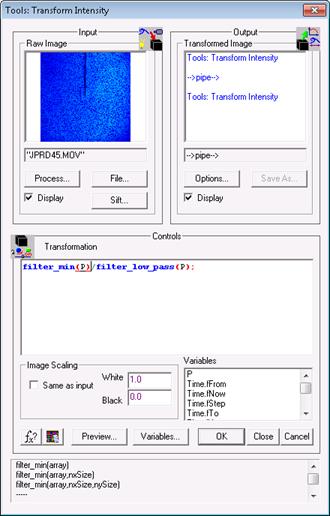

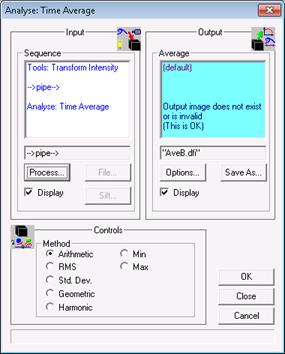

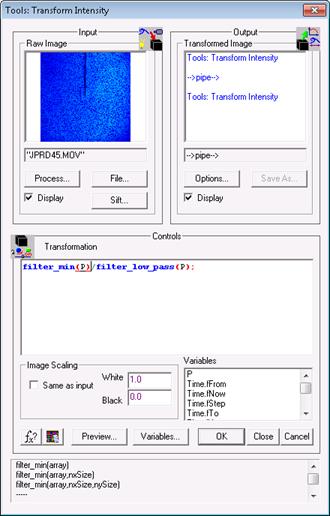

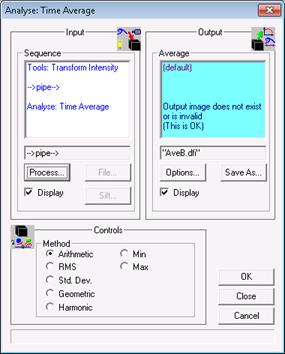

(§5.6.1.6), Tools: Transform Intensity (§5.7.2) and Tools: Combine Images (§5.7.3).

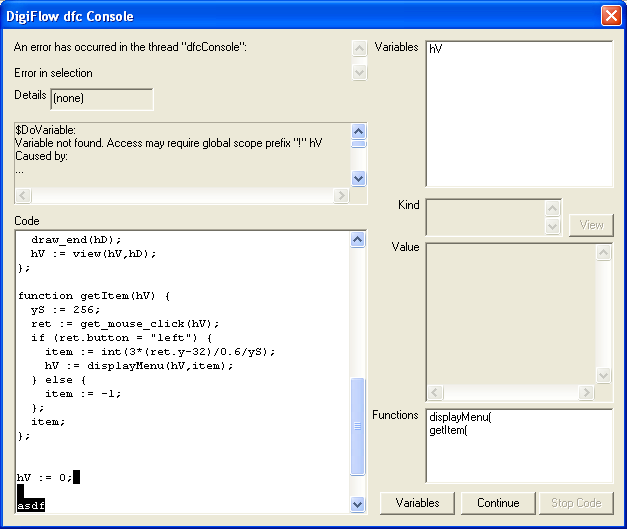

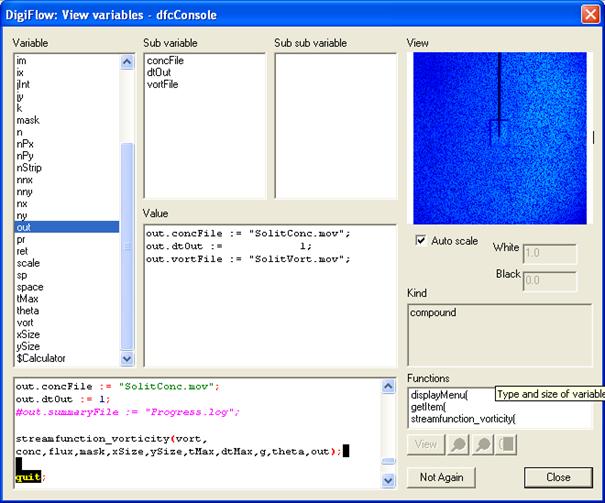

Details of the macro code itself are given in §§8 and 9. However, this
manual gives only a relatively brief introduction to a subset of the dfc
functions available within DigiFlow. Instead, the bulk of the documentation
is provided within an interactive help facility available from within
DigiFlow itself in the Help: dfc Functions menu item, and from the button
provided within dialogs where such information is of value.

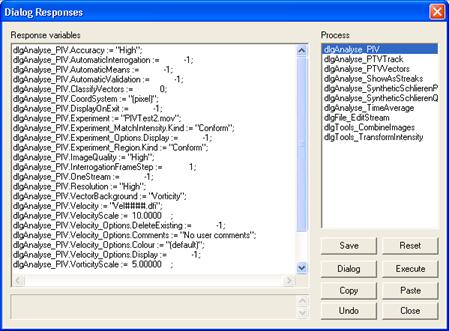

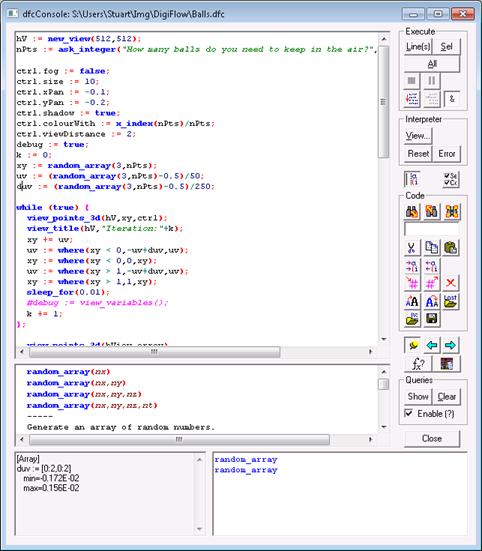

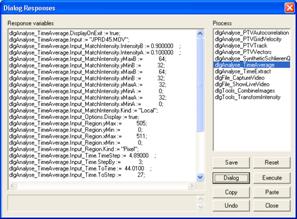

The help facility takes the form of the dialog illustrated in figure 21. To
find a function performing a given task, simply type some information about
that task into the Search for box. For example, if you want to find
functions that have something to do with drawing, enter “draw”. You will
notice that the Look up list changes as each letter is typed. When you type
the “d”, the list is reduced so that it includes only those entries with a
“d” somewhere in their names. Similarly, “dr” leads to a further reduction,
excluding those that do not contain this pattern, and so on.

Spaces in the Search for box are interpreted as “and” criteria for the
search. For example, entering “dr ma” would reduce the list to those
functions with both “dr” and “ma” in their names, but without the two
patterns needing to be adjacent. This, combined with the logical and
descriptive (if somewhat verbose) naming conventions for DigiFlow
functions, provides a very powerful search facility.

At all stages the Look up list is sorted alphabetically. (Note that if
Search for is left blank, then Look up contains all possible functions.)
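The search behaviour described above can be sketched as a simple filter. This is an illustration rather than DigiFlow's implementation: the function names in the example are hypothetical, and case-insensitive matching is an assumption that the text does not confirm.

```python
def look_up(function_names, search_for):
    """Return the names containing every space-separated pattern, sorted.

    Patterns are "and" criteria and need not be adjacent within a name.
    Assumption: matching ignores case. A blank search returns all names.
    """
    patterns = search_for.lower().split()
    matches = [
        name
        for name in function_names
        if all(pattern in name.lower() for pattern in patterns)
    ]
    return sorted(matches, key=str.lower)


# Hypothetical function names, used only to demonstrate the filtering.
names = ["draw_mark", "draw_line", "read_image", "matrix_draw"]

narrowed = look_up(names, "dr ma")   # names containing both "dr" and "ma"
everything = look_up(names, "")      # blank search: all names, sorted
```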

Selecting an item in the Look up list brings up the documentation for the
function in the three boxes below. The top box identifies the role played
by the entry within dfc code. The list box below it gives the range of
possible entry points to the function. As we shall see later, many DigiFlow
functions are “overloaded” (i.e. they accept more than one type of data),
and may have optional parameters. This list itemises the full range of
possibilities. Selecting an entry point from this list and clicking the
Copy button copies this entry point to the clipboard.

The bottom control on the dialog provides the detailed

documentation for the selected function. This documentation should be read in

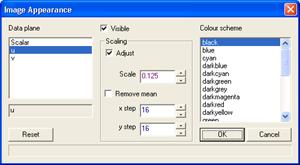

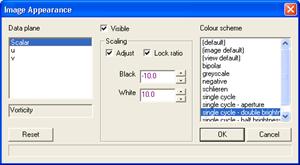

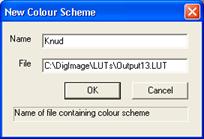

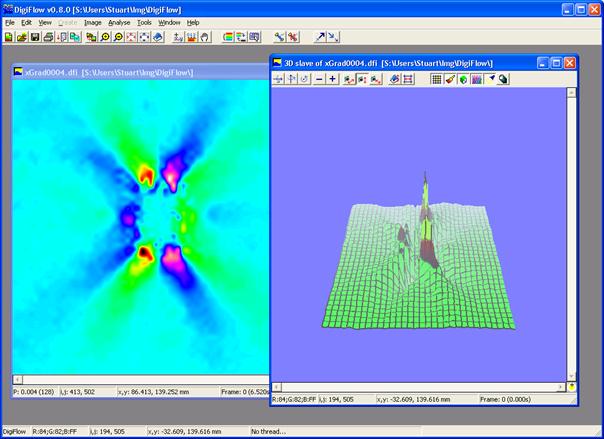

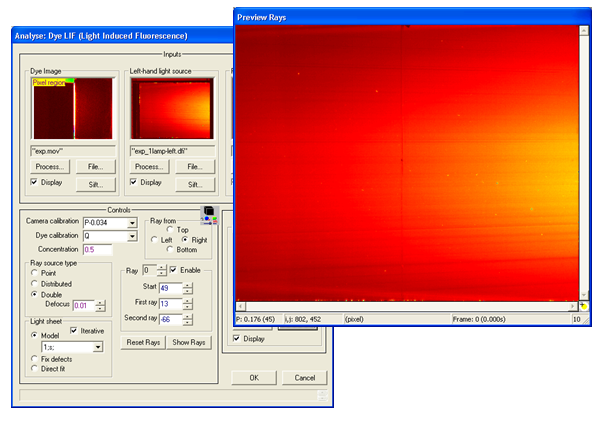

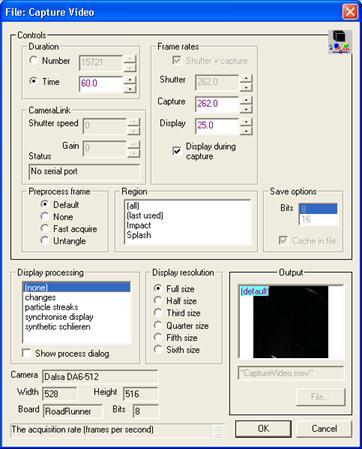

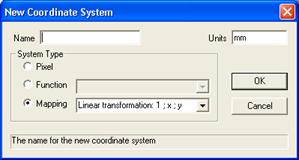

conjunction with the entry point documentation. The help system is hyperlinked